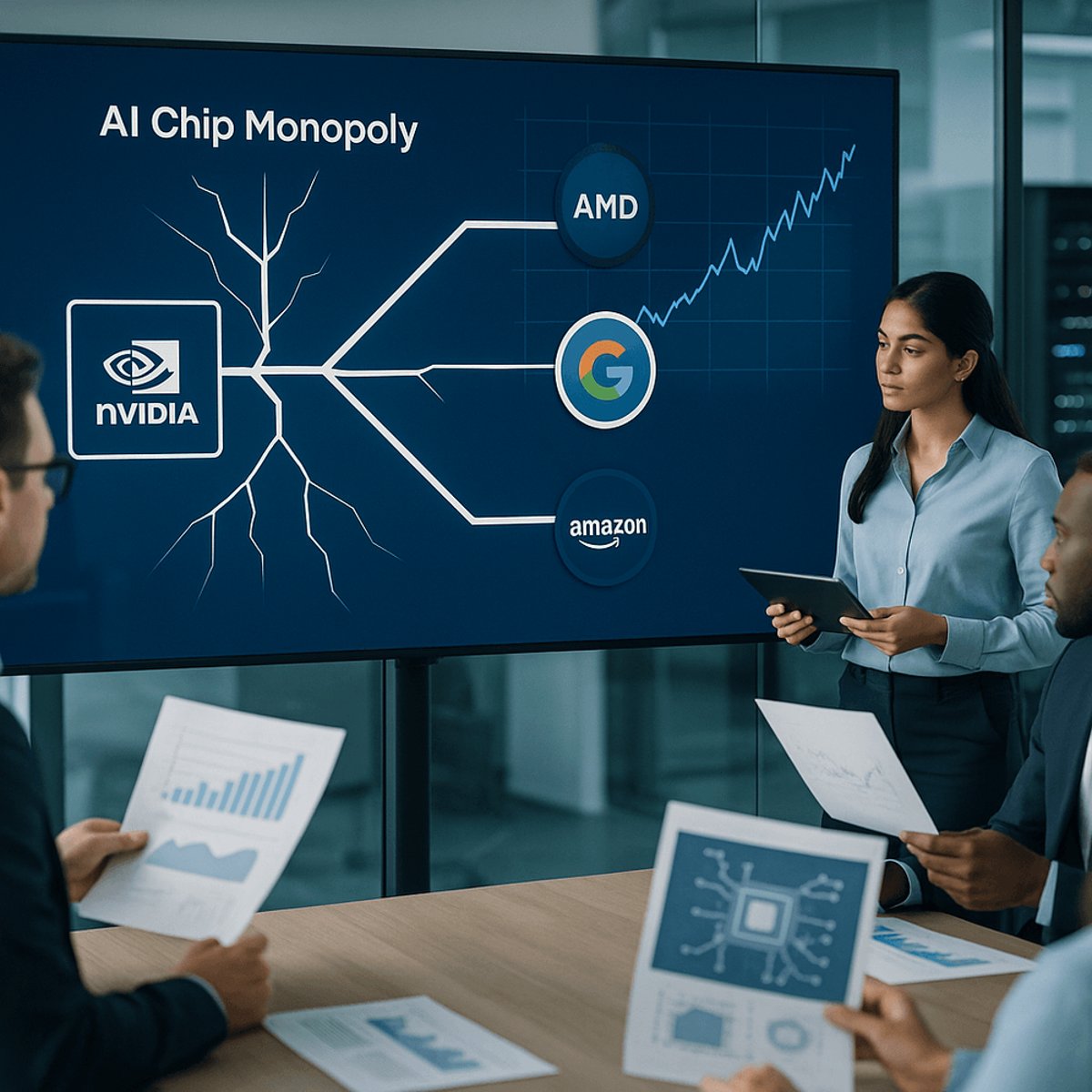

Nvidia just wrapped up one of its worst weeks in recent memory, not because the company's growth is slowing, but because Wall Street finally woke up to the chip giant's Achilles' heel: its dependence on a handful of large customers that are now diversifying away from it. Meta confirmed it's deploying AMD processors and is reportedly eyeing Google silicon, while OpenAI is pivoting to Amazon chips for AI inference workloads. The message from Big Tech is clear - they're done putting all their eggs in Nvidia's basket, and investors are starting to sweat.

Nvidia has spent the past two years riding an AI gold rush that turned it into one of the world's most valuable companies. But this week, the chinks in its armor became impossible to ignore. The company's stock stumbled as investors absorbed a cascade of news that the AI chip market - once Nvidia's private playground - is rapidly becoming a multi-player game.

The most striking blow came from Meta, which confirmed it's actively deploying AMD MI300X accelerators across its data centers. According to reports from industry analysts, Meta is also in advanced discussions to integrate Google's Tensor Processing Units (TPUs) into its AI infrastructure stack. For a company that's spent billions building out Nvidia-powered AI clusters, the pivot signals something bigger than simple cost optimization - it's a strategic hedge against supply constraints and vendor lock-in.

Then came the OpenAI revelation. The ChatGPT maker is reportedly shifting significant inference workloads to Amazon Web Services' proprietary Inferentia and Trainium chips. While Nvidia's H100 and H200 GPUs still dominate the training side of AI workloads, the inference market - where trained models actually serve customer queries - is proving far more price-sensitive and competitive. Amazon's chips reportedly offer roughly 40% better price-performance for specific inference tasks, according to independent benchmarks.

Wall Street's reaction was swift and brutal. Nvidia shares slid throughout the week as analysts rushed to recalibrate their models. The concern isn't that Nvidia's growth is stalling - the company's data center revenue continues to surge, and demand for its latest Blackwell architecture remains insatiable. Instead, investors are grappling with the prospect of margin compression and market share erosion as competitors chip away at different segments of the AI infrastructure stack.

The competitive landscape Nvidia faces today looks nothing like the one it faced 18 months ago. AMD has finally delivered credible alternatives with its MI300 series, closing the performance gap while undercutting Nvidia on price. Google continues refining its TPUs, which now not only power internal workloads but increasingly attract external cloud customers. Amazon has emerged as the dark horse, leveraging its AWS distribution muscle to push custom silicon into enterprise accounts.

What makes this shift particularly dangerous for Nvidia is who is driving it. The company's largest customers - Meta, Microsoft, Google, Amazon - collectively represent the bulk of AI infrastructure spending globally. These hyperscalers aren't just experimenting with alternatives; they're redesigning their entire chip procurement strategies around multi-vendor architectures. The goal is simple: never again face the supply crunches and pricing pressure that came with Nvidia's near-monopoly during the initial AI boom.

Industry insiders suggest the diversification trend will accelerate. Hyperscalers have invested billions in custom chip development, and they're determined to see returns on those bets. Meta alone has spent over two years building internal chip expertise, hiring semiconductor veterans from Nvidia, Intel, and Qualcomm. The company's recent job postings reveal aggressive expansion of its silicon design teams.

But Nvidia isn't standing still. The company's CUDA software ecosystem remains the gold standard for AI development, creating sticky customer relationships that go beyond raw hardware performance. Developers have spent years optimizing code for Nvidia architectures, and that installed base provides meaningful protection against competitive threats. The question is whether software lock-in can offset hardware commoditization.

Some analysts argue the market overreacted this week. Nvidia's revenue guidance for the current quarter remains robust, and the company continues shipping chips as fast as it can manufacture them. The AI infrastructure buildout is still in its early innings, they contend, with plenty of growth to support multiple winners. Others counter that Nvidia's valuation always assumed durable competitive advantages - advantages that look increasingly temporary as customers diversify.

The shift also reflects broader changes in AI economics. As models mature and move from research to production, the cost structure changes dramatically. Training remains compute-intensive and performance-sensitive - Nvidia's sweet spot. But inference workloads, which generate the actual business value, prioritize efficiency over raw speed. That's where AMD's price advantage and Amazon's custom silicon find their opening.

OpenAI's decision to embrace Amazon chips is particularly telling. The company operates one of the world's largest AI inference deployments, serving hundreds of millions of ChatGPT queries daily. At that scale, even modest efficiency improvements translate to tens of millions of dollars in annual savings. When margins matter, loyalty becomes negotiable.

This week marks an inflection point in the AI chip wars. Nvidia's dominance isn't disappearing overnight, but the moat is narrowing as hyperscalers execute multi-year strategies to reduce their dependency. For investors, the calculus has shifted from pure growth to sustainable competitive positioning. The AI infrastructure boom continues, but Nvidia now shares the stage with a cast of well-funded competitors armed with their own silicon, and its customers are hungry for alternatives. Wall Street's repricing reflects not what's happening today, but what investors now expect tomorrow: a more balanced, more competitive AI chip ecosystem where even the king has to fight for every point of market share.