OpenAI's ChatGPT just failed a basic accuracy test. When WIRED asked the chatbot to cite the publication's own product recommendations for TVs, headphones, and laptops, it confidently delivered wrong answers across the board. The experiment, published April 1, exposes a critical vulnerability in how millions of consumers now rely on AI for shopping advice - and how often these systems simply make things up.

OpenAI's flagship chatbot just got caught making up product recommendations wholesale. In an investigation published by WIRED, reporter Reece Rogers asked ChatGPT a seemingly straightforward question: What products does WIRED's review team actually recommend? The results weren't just slightly off - they were completely fabricated.

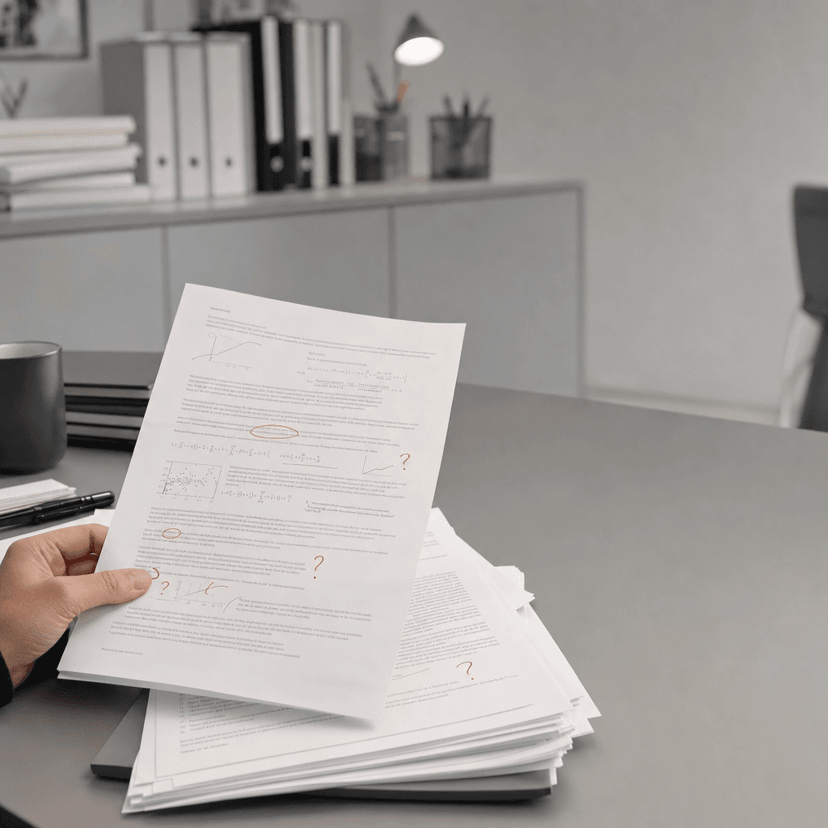

The AI confidently cited specific TVs, headphones, and laptops as WIRED's top picks, none of which the publication's reviewers had actually endorsed. It's a textbook case of AI hallucination, the industry term for when large language models generate plausible-sounding but entirely false information. And it's happening at scale as millions of shoppers turn to ChatGPT for buying advice.

This isn't an academic problem. Consumers are making real purchasing decisions based on ChatGPT's recommendations, often involving products costing hundreds or thousands of dollars. The bot speaks with such confidence that most users wouldn't think to fact-check its claims against the original sources. Rogers' experiment shows exactly why that's dangerous.

The timing couldn't be more awkward for OpenAI. The company has been pushing ChatGPT as a reliable research and shopping assistant, recently adding features that surface product recommendations and shopping links. But if the underlying model can't accurately cite what a publication actually recommends - even when that publication is well-indexed and widely available online - it raises serious questions about the technology's readiness for consumer advice.

This isn't the first time ChatGPT has been caught fabricating citations. Legal filings, academic references, and news sources have all been hallucinated by the model in previous tests. But product recommendations hit differently because they directly impact consumer spending and trust. When ChatGPT invents a legal case, lawyers can catch it. When it makes up a product review, everyday shoppers have no defense.

The hallucination problem stems from how large language models work. ChatGPT generates text by predicting which words are likely to come next based on patterns in its training data, not by retrieving factual information from a database. It doesn't "know" what WIRED recommends - it generates plausible-sounding answers that match the pattern of product recommendations. Sometimes those match reality. Often, as Rogers discovered, they don't.
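To make the difference between predicting words and retrieving facts concrete, here is a deliberately tiny sketch. It is a toy bigram predictor, not how ChatGPT is actually built, and every site and product name in it (`reviewsite`, `acme vision`, `zenith one`) is invented for illustration. The predictor simply continues the prompt with whatever word followed most often in its training text, while the lookup only answers when it has a record.

```python
from collections import Counter, defaultdict

# Hypothetical training text. All site and product names are invented.
CORPUS = [
    "shopblog recommends acme vision",
    "dealsite recommends acme vision",
    "reviewsite recommends zenith one",
]

# Hypothetical record of what each site actually recommends.
RECORDS = {"reviewsite": "zenith one"}


def train_bigrams(sentences):
    """Count which word follows which across the training text."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def generate(follows, prompt, max_words=5):
    """Greedily append the most frequent next word. No facts are checked."""
    words = prompt.split()
    for _ in range(max_words):
        candidates = follows.get(words[-1])
        if not candidates:
            break  # nothing ever followed this word in training
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


def lookup(site):
    """Return the recorded recommendation, or None if there is no record."""
    return RECORDS.get(site)


FOLLOWS = train_bigrams(CORPUS)
predicted = generate(FOLLOWS, "reviewsite recommends")
recorded = lookup("reviewsite")
print(predicted)  # fluent, but follows the majority pattern
print(recorded)   # what the record actually says
```

In this toy setup the predictor attributes the most common product in its training text to `reviewsite`, even though the record says otherwise, and it never signals any doubt. Real models are vastly more capable, but the underlying point is the same: fluency comes from patterns, not from checking a source.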

OpenAI has made reducing hallucinations a priority, implementing techniques like reinforcement learning from human feedback and adding retrieval-augmented generation capabilities. The company's GPT-4 model showed improvements over GPT-3.5 in factual accuracy. But clearly the problem persists, especially in domains like product reviews where the model needs to cite specific, verifiable claims.

Competitors face the same challenge. Google's AI Overviews feature has been caught providing incorrect information in search results, while Meta's AI assistants have hallucinated biographical details. The difference is scale: with 200 million weekly users, ChatGPT potentially delivers false product advice to more people than any other AI system.

For publishers like WIRED, the situation creates a bizarre problem. Their reviews and recommendations, painstakingly researched and tested, are being displaced in consumers' minds by an AI that confidently attributes different picks to them. It's not just wrong - it actively undermines the value of original journalism and expert testing.

The experiment also reveals a deeper issue with how we're integrating AI into decision-making. ChatGPT provides no citations, no confidence scores, no indication that users should verify its claims. It simply states answers as fact, leaving users to assume the information is accurate. That design choice looks increasingly irresponsible as hallucination cases pile up.

Some AI shopping assistants are taking different approaches. Perplexity AI includes citations with every answer, allowing users to verify claims. Amazon's Rufus assistant only recommends products actually sold on Amazon's platform, reducing the hallucination risk. These guardrails matter when real money is on the line.
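The two guardrails described above, restricting answers to a known catalog and tying claims to a source that can be checked, can be sketched in a few lines. This is an illustrative assumption about the general idea, not a description of how Perplexity or Rufus are implemented. The function names, the catalog, and the product names are all hypothetical.

```python
def filter_to_catalog(candidates, catalog):
    """Keep only candidate product names that exist in the catalog.

    Matching is case-insensitive and exact, so invented names drop out.
    """
    known = {name.casefold() for name in catalog}
    return [c for c in candidates if c.casefold() in known]


def is_supported(claimed_product, source_text):
    """Check that the cited source text actually mentions the product.

    A plain substring match is a crude stand-in for real verification.
    """
    return claimed_product.casefold() in source_text.casefold()


# Hypothetical data: invented product names and an invented review excerpt.
catalog = ["Acme Vision", "Zenith One"]
model_suggestions = ["acme vision", "Nova Max"]
review_excerpt = "Our top pick this year is the Zenith One."

allowed = filter_to_catalog(model_suggestions, catalog)
print(allowed)
print(is_supported("Zenith One", review_excerpt))
print(is_supported("Nova Max", review_excerpt))
```

Neither check makes a model more truthful. They only narrow what it is allowed to say, or give readers a way to confirm a claim against the original text, which is the kind of verification the WIRED test found lacking.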

The stakes extend beyond individual purchase decisions. As AI chatbots become default research tools, hallucinated recommendations could reshape entire product markets. If ChatGPT consistently recommends non-existent or inferior products, it could distort consumer demand and reward manufacturers whose products happen to match the AI's invented preferences rather than actual quality.

Rogers' investigation crystallizes a problem the AI industry has been downplaying for months. Large language models aren't reliable sources of factual information, no matter how confidently they speak. Until OpenAI and its competitors solve hallucination - or at minimum add clear warnings when citing specific claims - consumers should treat ChatGPT's product advice the same way they'd treat a stranger's random opinion. It might be right, but you'd better check before spending your money on it. For now, when you want to know what WIRED's reviewers actually recommend, skip the chatbot and read the reviews yourself.