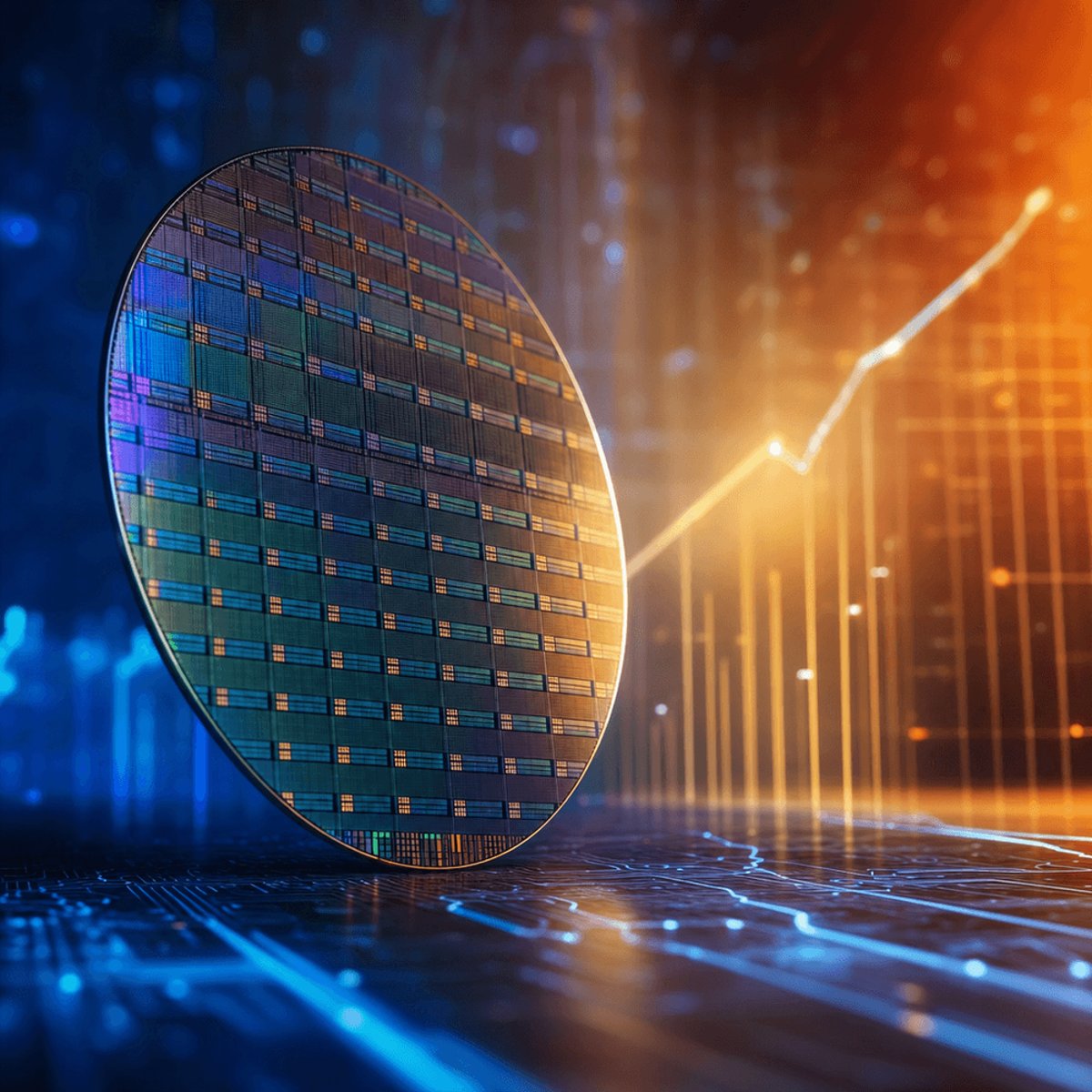

Cerebras just delivered one of the year's most explosive IPO debuts, with shares surging in their Wall Street premiere Thursday and sending a clear signal that investors see AI chip demand as far from saturated. The company, which makes wafer-scale processors designed to challenge Nvidia's data center dominance, is the first major AI chip pure-play to go public since the generative AI boom took off. For an industry watching Nvidia command an estimated 80% of the AI accelerator market and a $3 trillion valuation, Cerebras's reception suggests Wall Street believes there's room for credible alternatives.

Cerebras Systems made its long-awaited public debut Thursday, and Wall Street didn't hold back. The AI chip startup, which has spent years positioning itself as the architectural alternative to Nvidia's GPU empire, saw its shares pop in early trading as investors piled into what many see as a pure-play bet on AI infrastructure demand.

The timing couldn't be more telling. While Nvidia continues to print record earnings and dominate data center spending, Cerebras's warm reception suggests the market believes the AI chip opportunity is big enough for multiple winners. That's a departure from the winner-take-all dynamics that have defined semiconductor markets for decades.

Cerebras built its reputation on a radically different approach to AI computing. Instead of clustering thousands of smaller GPUs together, as Nvidia's systems do, the company manufactures wafer-scale engines - single chips roughly the size of a dinner plate. Its second-generation WSE-2 contains 2.6 trillion transistors and 850,000 cores. The architectural bet is that eliminating the communication overhead between separate chips delivers faster training times and more efficient inference.

That pitch has resonated with a specific slice of the market. According to CNBC, Cerebras has landed contracts with AI research labs and enterprises looking for alternatives to Nvidia's ecosystem, particularly for large language model training where memory bandwidth becomes a critical bottleneck. The company's CS-3 system can handle models with up to 24 trillion parameters without the memory shuffling that slows down multi-GPU setups.
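To put the 24-trillion-parameter figure in perspective, here is a rough back-of-the-envelope sketch of how much memory the weights alone would occupy. The 2 bytes per parameter (16-bit weights) and the 80 GB per-accelerator memory are illustrative assumptions, not figures from Cerebras or CNBC, and the estimate ignores optimizer state and activations, which push real training footprints higher.

```python
import math

def weight_memory_tb(num_params: float, bytes_per_param: int = 2) -> float:
    """Memory needed to hold the model weights, in terabytes (1 TB = 1e12 bytes).

    bytes_per_param=2 assumes 16-bit weights (an assumption for illustration).
    """
    return num_params * bytes_per_param / 1e12

def devices_needed(total_tb: float, device_memory_gb: float) -> int:
    """Minimum number of accelerators whose combined memory holds total_tb."""
    return math.ceil(total_tb * 1000 / device_memory_gb)

PARAMS = 24e12           # 24 trillion parameters, the figure cited above
DEVICE_MEMORY_GB = 80.0  # hypothetical per-accelerator memory capacity

weights_tb = weight_memory_tb(PARAMS)
print(f"Weights alone: {weights_tb:.0f} TB")
print(f"Accelerators needed just to hold them: {devices_needed(weights_tb, DEVICE_MEMORY_GB)}")
```

Under those assumptions, the weights would have to be spread across hundreds of devices, and every split adds traffic between them. That is the "memory shuffling" the wafer-scale pitch aims to avoid.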

But going public also exposes Cerebras to scrutiny it has largely avoided as a private company. The firm faces questions about manufacturing economics - a chip that spans an entire wafer is all but certain to contain some manufacturing defects, which makes yield a persistent concern - and about whether its specialized architecture can compete as Nvidia continues improving its own interconnect technologies like NVLink and NVSwitch. The company's reliance on TSMC for fabrication also puts it in direct competition for the same cutting-edge process nodes that Nvidia, Apple, and AMD are scrambling to secure.
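The yield concern follows from simple arithmetic. A minimal sketch using the textbook Poisson yield model, in which the chance of a defect-free die falls exponentially with die area, shows why. The defect density and both die areas below are illustrative assumptions, not figures disclosed by Cerebras or TSMC.

```python
import math

def expected_defects(die_area_cm2: float, defects_per_cm2: float) -> float:
    """Average number of random defects landing on one die."""
    return die_area_cm2 * defects_per_cm2

def poisson_yield(die_area_cm2: float, defects_per_cm2: float) -> float:
    """Probability that a die has zero defects under the Poisson yield model."""
    return math.exp(-expected_defects(die_area_cm2, defects_per_cm2))

DEFECT_DENSITY = 0.1      # defects per cm^2 (hypothetical)
LARGE_GPU_AREA = 8.0      # cm^2, a large conventional die (assumed)
WAFER_SCALE_AREA = 460.0  # cm^2, a die covering most of a wafer (assumed)

for name, area in [("large GPU die", LARGE_GPU_AREA),
                   ("wafer-scale die", WAFER_SCALE_AREA)]:
    print(f"{name}: ~{expected_defects(area, DEFECT_DENSITY):.1f} expected defects, "
          f"defect-free probability {poisson_yield(area, DEFECT_DENSITY):.2e}")
```

With these numbers a flawless wafer-scale die is effectively impossible, so the economics depend on tolerating defects, for example by building in spare cores and routing around bad ones, rather than on producing perfect silicon.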

What's undeniable is the market timing. Enterprise AI spending is running at unprecedented levels, with cloud providers building out massive training clusters and inference infrastructure. Microsoft, Google, and Amazon have all announced multi-billion-dollar AI capital expenditure plans, while AI-native companies like OpenAI and Anthropic are burning through compute capacity at staggering rates. That gold-rush mentality is creating openings for alternatives.

Cerebras isn't the only company trying to crack Nvidia's dominance. Startups like Groq, SambaNova, and Graphcore have raised billions betting on specialized AI architectures, while Google continues investing heavily in its TPU program. But Cerebras is the first to face public market pressure to prove its model works at scale. The IPO gives the company capital to expand manufacturing and sales, but it also starts the clock on demonstrating sustainable revenue growth and a path to profitability.

The chip industry has seen this pattern before - promising architectures that struggle to overcome the ecosystem advantages of entrenched players. Nvidia's CUDA software platform, built over 15 years, creates powerful lock-in effects that make switching costly even when alternatives offer better raw performance. Cerebras will need to prove it can deliver not just faster chips but a complete stack that developers actually want to use.

For now, though, investors are betting on capacity constraints and architectural diversity. As AI workloads grow more complex and model sizes continue scaling, the argument goes, the industry will need multiple chip architectures optimized for different tasks. Cerebras's wafer-scale approach may carve out a defensible position in training and high-throughput inference, even if Nvidia maintains its lead in general-purpose AI acceleration.

Cerebras's IPO isn't just another tech company going public - it's a referendum on whether the AI chip market can support architectural diversity or whether Nvidia's ecosystem advantages will prove insurmountable. The strong debut suggests investors believe demand is intense enough that even niche players with differentiated technology can build meaningful businesses. But the hard part starts now. Cerebras needs to prove it can scale manufacturing, expand beyond early adopters, and deliver returns in a market where Nvidia's lead only seems to grow. The next few quarters will reveal whether Thursday's enthusiasm was justified or whether the AI chip market remains a one-company show with expensive supporting acts.