Google Cloud's AI leadership just laid out the competitive landscape that's reshaping enterprise artificial intelligence. In an exclusive interview with TechCrunch, the company's Cloud AI team said they're racing along three frontiers at once: raw intelligence, response time, and what they're calling "extensibility," the ability to adapt models to specialized tasks. It's a framework that shows how Google is thinking about AI competition beyond the headline-grabbing benchmarks.

Google is reframing the AI arms race. While competitors fixate on benchmark scores and parameter counts, the search giant's Cloud AI division is mapping a more nuanced battleground—one where speed and adaptability matter just as much as raw capability.

The strategic perspective emerged from Google Cloud's AI leadership in its conversation with TechCrunch, offering rare insight into how one of the industry's major players is thinking about the next phase of AI development. It's not just about building smarter models anymore. It's about building models that work faster, adapt better, and integrate seamlessly into enterprise workflows.

The first frontier—raw intelligence—is the one everyone already knows. It's the dimension that fuels headlines every time OpenAI or Anthropic drops a new model. But Google's framing suggests this is just one axis of competition, and possibly not even the most important one for enterprise customers.

Response time represents the second battlefield. As AI models balloon in size and complexity, inference speed becomes critical. A model that takes 30 seconds to respond might ace every benchmark but fail in real-world applications where users expect instant results. Google's emphasis here aligns with broader industry moves toward smaller, faster models, such as the smaller variants of Meta's Llama or Microsoft's Phi series.

But it's the third frontier that reveals Google's strategic positioning. "Extensibility"—the ability to customize and adapt models for specific enterprise use cases—speaks directly to where the real money flows in AI. Generic chatbots grab consumer attention, but enterprises need models that understand their data, their workflows, and their compliance requirements.

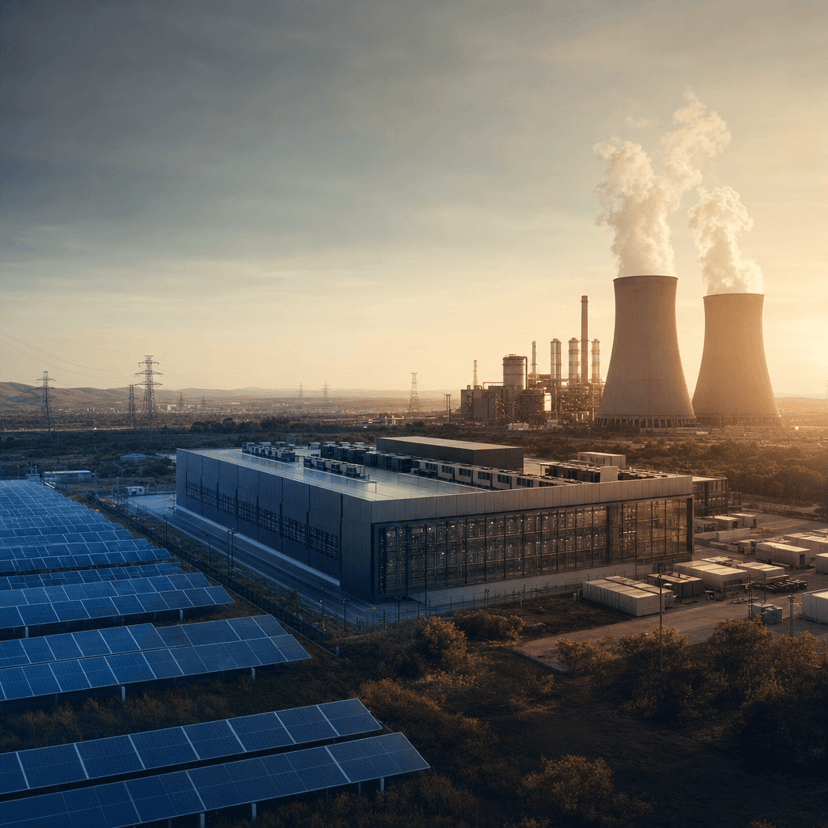

This framework lands as Google Cloud battles Microsoft Azure and Amazon Web Services for enterprise AI dominance. Microsoft secured an early advantage through its OpenAI partnership, while Amazon is pushing Bedrock as a model-agnostic platform. Google's response appears to be positioning Vertex AI as the platform that excels across all three dimensions simultaneously.

The extensibility focus also differentiates Google's approach from consumer-focused AI labs. While OpenAI optimizes for general-purpose assistance and viral demos, Google Cloud is betting that enterprises care more about fine-tuning capabilities, integration options, and deployment flexibility. It's a bet backed by Google's infrastructure advantages—the company runs one of the world's largest cloud platforms and has deep expertise in serving models at scale.

The timing is strategic. As the AI industry matures beyond the initial GPT moment, differentiation becomes crucial. Raw capability improvements appear to be slowing, with the gap between leading models narrowing considerably over the past year. Speed optimizations eventually run into hardware limits. But extensibility remains wide open, especially as enterprises move from experimentation to production deployments.

Google's three-frontier framework also hints at internal priorities. The company has shipped multiple model families recently: Gemini for general use, plus specialized variants for code and multimodal tasks. This portfolio approach makes more sense when viewed through the extensibility lens: different models for different enterprise needs, all accessible through a unified platform.

Competitors are watching. Microsoft already offers extensive fine-tuning options through Azure, while Amazon built Bedrock specifically around model choice and customization. OpenAI has started emphasizing its API's flexibility and custom model programs. The race Google is describing isn't hypothetical—it's already underway.

For enterprises making AI infrastructure decisions, this framing offers a useful evaluation rubric. Instead of picking the model with the highest benchmark score, consider: Does it respond fast enough for your use case? Can you adapt it to your specific domain? Does the platform support the kind of extensibility you'll need as requirements evolve?
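For teams that want to make that rubric concrete, here is one rough way it might be sketched in Python. Everything in it is an assumption for illustration, not something Google described: the `Candidate` fields, the model names, the sample numbers, and the choice to treat 95th-percentile latency and a yes/no tuning flag as stand-ins for "fast enough" and "adaptable."

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class Candidate:
    """Hypothetical record for one model/platform under evaluation."""
    name: str
    quality_score: float       # assumed: score on your own task-specific eval, 0 to 1
    latencies_ms: list         # assumed: response times measured on sample requests
    supports_tuning: bool      # assumed: can the model be adapted to your domain?


def p95(samples):
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    # method="inclusive" interpolates within the observed range of samples.
    return quantiles(samples, n=20, method="inclusive")[-1]


def shortlist(candidates, latency_budget_ms, min_quality):
    """Keep candidates that clear all three bars, best quality first."""
    passing = [
        c for c in candidates
        if c.quality_score >= min_quality            # intelligence
        and p95(c.latencies_ms) <= latency_budget_ms  # response time
        and c.supports_tuning                         # extensibility
    ]
    return sorted(passing, key=lambda c: c.quality_score, reverse=True)


# Made-up numbers purely to exercise the rubric.
candidates = [
    Candidate("model-a", 0.85, [200, 220, 250, 240, 230, 210, 260, 225, 235, 245], True),
    Candidate("model-b", 0.92, [2800, 3100, 2950, 3300, 3050, 2900, 3200, 3000], True),
    Candidate("model-c", 0.80, [150, 160, 155, 170, 165, 158, 162, 168], False),
    Candidate("model-d", 0.78, [300, 320, 310, 340, 330, 315, 325, 335], True),
]

picked = shortlist(candidates, latency_budget_ms=500, min_quality=0.75)
print([c.name for c in picked])
```

In this toy data the highest-scoring model drops out on latency and another drops out because it cannot be tuned, which is the point of the framing: the benchmark leader is not automatically the right pick.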

Google Cloud's willingness to articulate this framework publicly also signals confidence. The company believes it can compete effectively across all three dimensions, not just cherry-pick favorable metrics. Whether that confidence is justified will play out in enterprise contract wins over the coming quarters.

The three-frontier framework reveals how AI competition is evolving from a one-dimensional race to a multi-faceted strategic challenge. As enterprises move from pilots to production, Google's bet on extensibility alongside intelligence and speed could define which cloud platforms win the next wave of AI deployments. The companies that master all three dimensions, not just one, are best positioned to capture the enterprise contracts that actually generate sustainable revenue. For now, Google Cloud is making its case that it's building for that more complex reality, even as competitors refine their own approaches to the same fundamental challenges.