Microsoft just released its first homegrown AI models, MAI-Voice-1 and MAI-1-preview, marking a significant strategic shift: the company, which has invested more than $10 billion in OpenAI, is now developing competing technology in-house. The move signals Microsoft's intent to reduce its dependence on external AI providers while drawing on its large store of consumer data.

Microsoft just fired the first shot in a contest that could reshape the AI landscape. The tech giant announced its first homegrown AI models on Thursday, MAI-Voice-1 and MAI-1-preview, a move that puts it in direct competition with OpenAI, the company Microsoft has invested more than $10 billion in since 2019.

Microsoft says the MAI-Voice-1 speech model can generate a full minute of audio in under one second on a single GPU. That efficiency could prove crucial as voice AI applications scale across Microsoft's ecosystem. The company is already using MAI-Voice-1 to power Copilot Daily, where an AI host delivers personalized news briefings, and to create podcast-style discussions that help users understand complex topics.
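To put the speed claim in perspective, here is a rough back-of-the-envelope sketch. It takes the reported figures (60 seconds of audio, under one second of compute, one GPU) at face value and uses one second as the upper bound on compute time, so the result is a lower bound. The function and variable names are illustrative only and do not come from Microsoft.

```python
def real_time_factor(audio_seconds: float, compute_seconds: float) -> float:
    """Seconds of audio produced per second of wall-clock compute."""
    if compute_seconds <= 0:
        raise ValueError("compute_seconds must be positive")
    return audio_seconds / compute_seconds


# Reported figures: one minute of audio in under one second on a single GPU.
# Assumption: treat 1.0 s as the worst case, which yields a lower bound.
rtf_lower_bound = real_time_factor(audio_seconds=60.0, compute_seconds=1.0)

# The same ratio applies at any time scale: hours of audio per GPU-hour.
audio_hours_per_gpu_hour = rtf_lower_bound

print(f"At least {rtf_lower_bound:.0f}x faster than real time")
print(f"At least {audio_hours_per_gpu_hour:.0f} hours of audio per GPU-hour")
```

In other words, if the claim holds, a single GPU could in principle narrate at least 60 hours of audio for every hour it runs, ignoring batching, queuing, and other serving overheads that the announcement does not describe.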

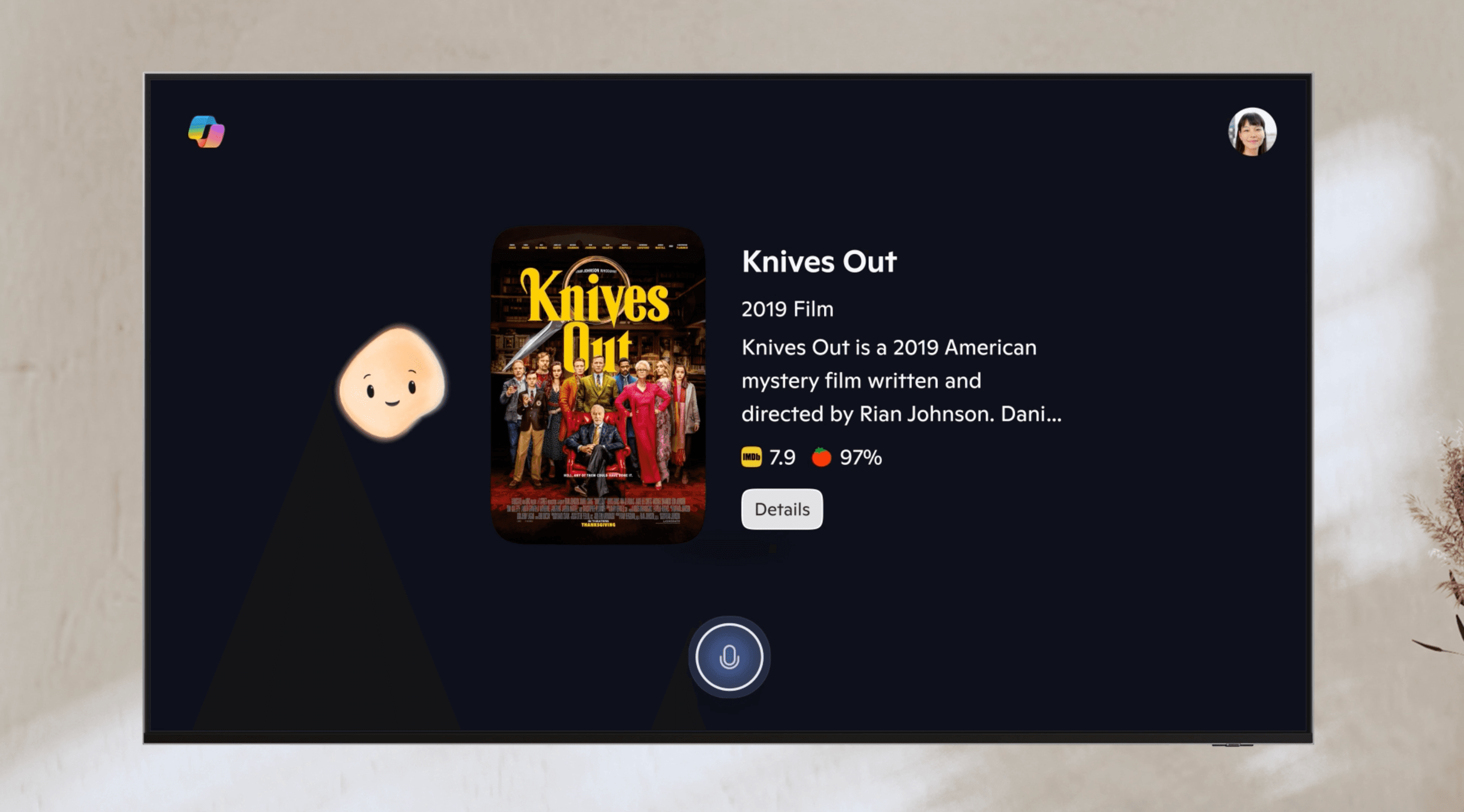

Users can experiment with MAI-Voice-1 directly through Copilot Labs, where they can enter text, select different voices, and adjust speaking styles. This consumer-facing approach aligns with the strategy of Microsoft AI chief Mustafa Suleyman, who told The Verge's Decoder podcast last year that the company's internal models prioritize consumer applications over enterprise use cases.

"My logic is that we have to create something that works extremely well for the consumer and really optimize for our use case," Suleyman explained during the interview. "So, we have vast amounts of very predictive and very useful data on the ad side, on consumer telemetry, and so on. My focus is on building models that really work for the consumer companion."

The second model, MAI-1-preview, required massive computational resources: it was trained on approximately 15,000 Nvidia H100 GPUs. The investment underscores Microsoft's commitment to building competitive large language models that can follow instructions and answer everyday queries. The company describes MAI-1-preview as offering "a glimpse of future offerings inside Copilot," suggesting broader integration ahead.