The Pentagon just dropped the hammer on Anthropic, formally designating the AI startup as a supply chain risk and barring defense contractors from using its Claude models. The unprecedented move comes as evidence emerges that Claude is being used inside Iran, marking a dramatic escalation in Washington's scrutiny of AI companies' global reach. Defense vendors will now need to certify they're not using Anthropic's technology, effectively locking the company out of billions in government contracts while rivals like OpenAI gain ground.

The Department of Defense just made it official: Anthropic is now considered a supply chain risk, a designation that will ripple through the entire defense-industrial complex and reshape how AI gets deployed in national security contexts.

According to the formal declaration reported by CNBC, defense vendors and contractors working with the Pentagon will be required to certify that they're not using Anthropic's models in any capacity. It's a stunning rebuke for a company that's raised over $7 billion from investors including Google and Salesforce Ventures, and it effectively slams the door on what could have been a massive revenue stream.

The timing tells the story. The designation comes as intelligence has surfaced showing Claude, Anthropic's flagship AI assistant, is being actively used inside Iran. While the exact nature and scale of that usage remains unclear, the mere presence of advanced AI capabilities in a sanctioned adversarial nation was apparently enough to trigger the Pentagon's risk calculus. It's the kind of scenario that keeps national security officials up at night: cutting-edge AI technology, developed with American capital and expertise, potentially supporting activities contrary to U.S. interests.

This isn't just bureaucratic box-checking. The supply chain risk designation has real teeth. Defense contractors, from prime integrators like Lockheed Martin and Northrop Grumman down to smaller specialized vendors, will need to audit their AI toolchains and verify they're Anthropic-free. That's no small task in an era where AI models get embedded in everything from logistics software to intelligence analysis tools. The certification requirement effectively creates a compliance minefield, and most contractors will likely navigate it by simply avoiding Anthropic entirely.

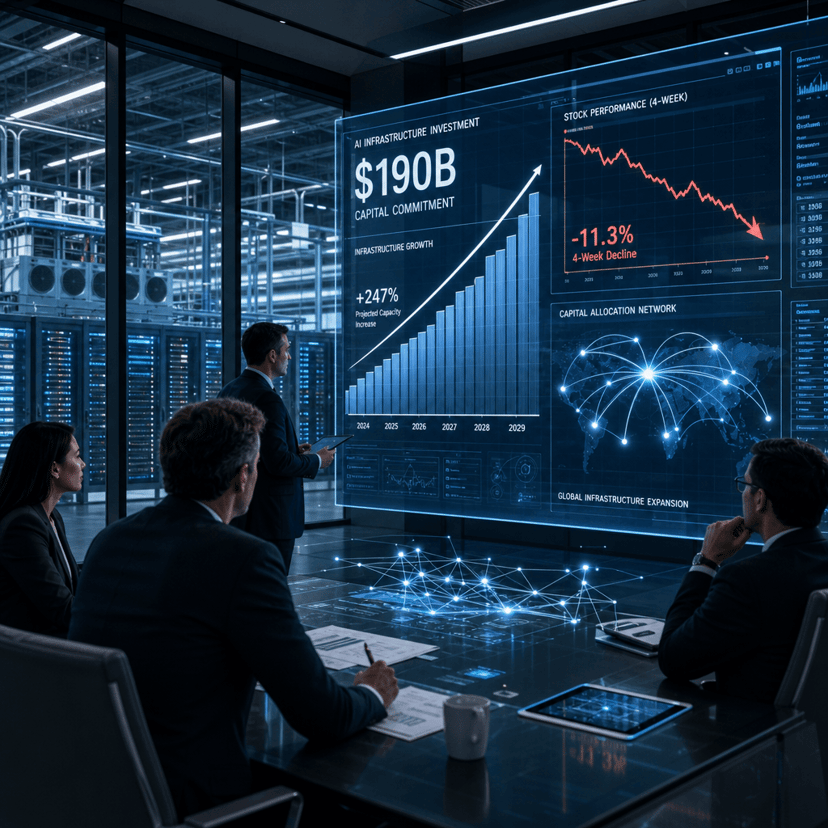

The move hands a significant competitive advantage to OpenAI, which has been actively courting government contracts and recently launched a dedicated government services division. While OpenAI has faced its own scrutiny over China-linked investment discussions, it hasn't triggered the kind of formal exclusion that Anthropic now faces. The company's GPT-4 and upcoming models remain viable options for defense applications, and contractors spooked by the Anthropic designation will likely default to OpenAI's offerings.

For Anthropic, the financial implications are staggering. The defense sector represents not just direct government contracts but an entire ecosystem of contractors, subcontractors, and vendors who touch classified or sensitive work. Getting blacklisted from that ecosystem cuts off access to customers who often pay premium prices and offer long-term, stable revenue. It's exactly the kind of enterprise business that AI companies need to justify their massive valuations.

The Iran connection adds a troubling dimension that goes beyond typical export control concerns. Unlike software that requires explicit installation or licensing, large language models can be accessed through various means: official APIs, mirror sites, or even locally run versions if the weights have leaked. Anthropic has positioned itself as a safety-focused AI company, but controlling where and how Claude gets used once it's released into the world is proving to be an intractable problem. The same accessibility that makes AI useful also makes it nearly impossible to contain geographically.

This designation also signals a broader shift in how Washington thinks about AI and national security. Rather than waiting for specific incidents or breaches, the Pentagon is taking a preemptive stance based on observed usage patterns and potential risks. It's a departure from traditional cybersecurity approaches and suggests that AI companies' global footprints will face increasing scrutiny. If your model shows up in the wrong places, even without your explicit authorization, you might find yourself frozen out of government business.

The enterprise AI market is watching closely. If the Pentagon's risk assessment spreads to other agencies or influences private sector procurement policies, Anthropic could face broader isolation. Industries with security-conscious procurement processes, such as healthcare, finance, and critical infrastructure, might adopt similar restrictions. What starts as a defense issue could metastasize into a wider market access problem.

Anthropic hasn't issued a public response to the designation, and the company's leadership has been notably quiet as this situation has developed. That silence is telling. There's no obvious playbook for getting removed from a supply chain risk list, especially when the underlying issue involves geopolitical adversaries accessing your technology through channels you don't control.

The Pentagon's formal designation of Anthropic as a supply chain risk represents a watershed moment for the AI industry. It's the first time a major AI company has been explicitly barred from the defense ecosystem, and it won't be the last. As AI models proliferate globally and show up in unexpected places, companies will face impossible choices between open accessibility and controlled deployment. For Anthropic, the immediate challenge is salvaging what's left of its enterprise credibility while watching OpenAI and others fill the vacuum in government contracts. The broader lesson for the industry: in the age of AI, where your technology ends up matters as much as what it can do, and companies that can't control their models' geographic footprint will pay a steep price.