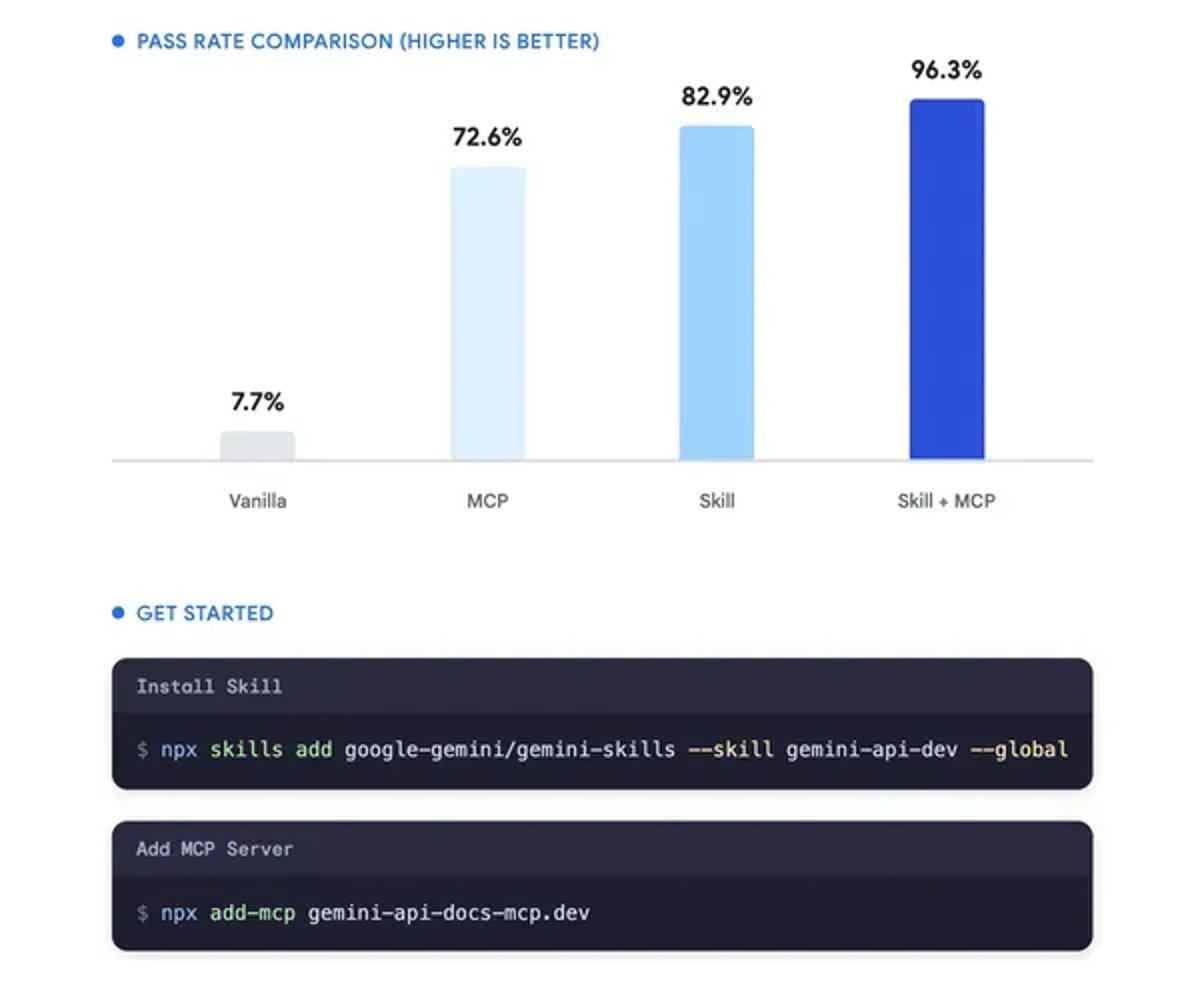

Google DeepMind just shipped two developer tools designed to fix a persistent problem plaguing AI coding agents: outdated API knowledge. The company's new Gemini API Docs MCP and Agent Skills aim to keep coding assistants current with Google's rapidly evolving Gemini API, addressing the training data cutoff issue that causes agents to generate deprecated code. For developers building on Gemini, it's a pragmatic fix to a friction point that's slowed AI-assisted development.

Google DeepMind is rolling out two complementary developer tools that tackle one of the more frustrating aspects of working with AI coding agents: their tendency to generate outdated API code. Product Manager Trey Nguyen announced the Gemini API Docs MCP and Agent Skills on Wednesday, acknowledging what developers have been grumbling about for months: agents trained on stale data keep suggesting deprecated methods.

The problem isn't subtle. AI coding assistants operate with knowledge frozen at their training cutoff date, which means they confidently recommend API patterns that Google may have sunset weeks or months ago. For teams building production systems on the Gemini API, that creates a debugging tax - time spent hunting down why perfectly logical-looking code throws errors.

The MCP integration addresses this head-on by implementing the Model Context Protocol, an open standard for connecting AI systems to external tools and live data sources. Instead of relying on baked-in training knowledge, coding agents can now query current Gemini API documentation in real time. It's the difference between consulting a textbook from last semester and pulling up the latest docs.
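To make the mechanics concrete, here is a minimal sketch of what a documentation lookup over MCP can look like on the wire. MCP messages are JSON-RPC 2.0, and tools are invoked with the `tools/call` method. Everything specific to Google's server is an assumption here: the tool name `search_docs` and its `query` argument are hypothetical placeholders, not names taken from the announcement, and a real agent would use an MCP client library and transport rather than building messages by hand.

```python
import json
from itertools import count

# Monotonic request ids, as JSON-RPC expects each request to carry one.
_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


def extract_text(response_json: str) -> str:
    """Join the text parts of a `tools/call` result, raising on failure."""
    response = json.loads(response_json)
    if "error" in response:  # protocol-level JSON-RPC error
        raise RuntimeError(response["error"].get("message", "unknown error"))
    result = response["result"]
    if result.get("isError"):  # the tool itself reported a failure
        raise RuntimeError("tool reported an error")
    return "\n".join(
        part["text"] for part in result["content"] if part.get("type") == "text"
    )


if __name__ == "__main__":
    # `search_docs` and `query` are hypothetical names used for illustration.
    print(build_tool_call("search_docs", {"query": "function calling"}))
```

The point of the sketch is the shape of the exchange: the agent asks a server for the current docs at the moment it needs them, then feeds the returned text into its context instead of leaning on what it memorized during training.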

Agent Skills works as the companion piece, providing structured capabilities that coding agents can invoke when working with Gemini APIs. Think of it as giving the agent a toolkit specifically optimized for Google's AI infrastructure, rather than making it improvise based on general programming knowledge.

The timing isn't coincidental. Google has been pushing hard into the enterprise AI market, competing directly with OpenAI, Microsoft, and Anthropic for developer mindshare. But winning that battle requires more than raw model performance - it demands a friction-free developer experience. Tools that generate broken code don't inspire confidence in production deployments.

What's interesting here is Google's embrace of MCP, a protocol that Anthropic introduced as an open standard in late 2024. Rather than building a proprietary solution, DeepMind is betting on a standard that could work across multiple AI platforms. That interoperability play suggests Google sees the bigger picture - developers want tools that integrate cleanly, not another walled garden.

The immediate impact will likely be felt by teams already deep in the Gemini ecosystem. If you're building AI features using Google's models, these tools should reduce the frustration of agents suggesting outdated patterns. But the broader signal is more significant: major AI labs are starting to treat developer tooling as seriously as model capabilities.

For the wider AI coding agent market, this sets a precedent. Microsoft's GitHub Copilot, as well as Cursor and other AI-assisted development tools, face similar staleness challenges. Google's solution - live documentation access via standardized protocols - could become table stakes.

The announcement arrives as enterprises are moving from AI experimentation to production deployment. Tools that seemed good enough for prototyping suddenly become blockers when reliability matters. Google is clearly trying to position Gemini as the enterprise-ready choice, and that means sweating details like API version accuracy.

What remains unclear is adoption velocity. Developer tools live or die based on how seamlessly they integrate into existing workflows. If the MCP implementation requires significant setup overhead, many teams might skip it. But if it just works with popular coding agents out of the box, it could quickly become expected infrastructure.

The release also highlights how fast the AI development tooling landscape is evolving. Just a year ago, the main complaint about coding agents was hallucination in general. Now we're at the stage where the problems are specific enough - like API version accuracy - that targeted solutions make sense. That's a sign of market maturation.

Google's move to fix AI coding agent accuracy isn't flashy, but it's strategically smart. By addressing a specific pain point that enterprise developers actually face - outdated API suggestions - DeepMind is building the kind of trust that wins production deployments. The embrace of open standards like MCP suggests Google understands that developer lock-in through proprietary tools is a losing strategy. Whether these tools gain traction will depend on execution, but the direction is clear: AI model performance alone won't win the enterprise. Reliable, friction-free tooling will.