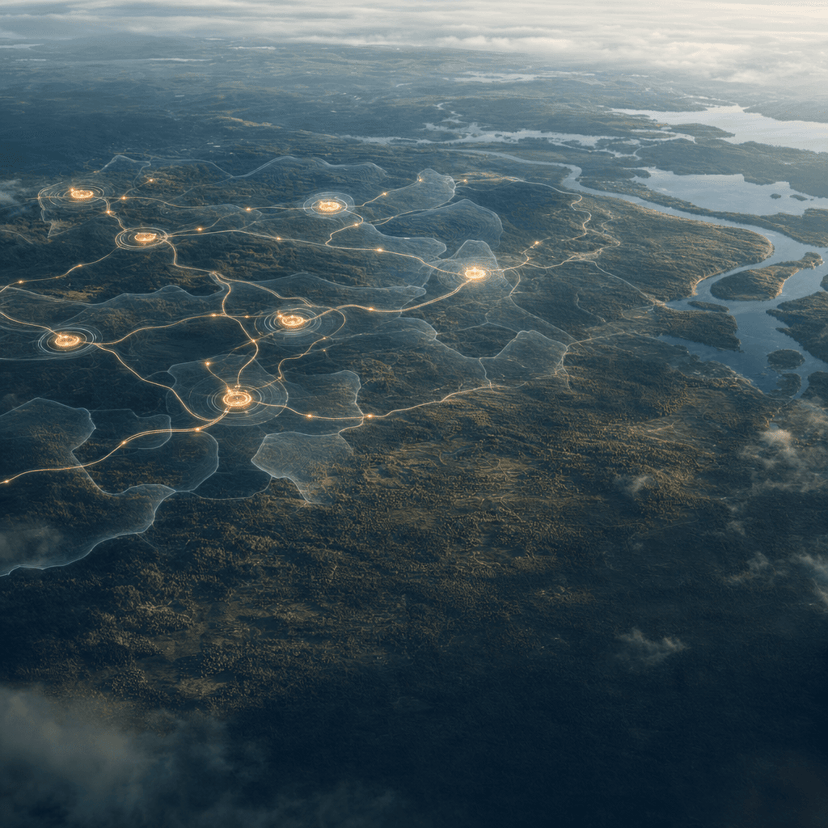

Elon Musk's audacious plan to build AI data centers in orbit is quickly moving from sci-fi concept to regulatory reality. Following SpaceX's FCC filing for a million-satellite network last week and this week's formal merger with xAI, the billionaire is now publicly defending the economics of space-based computing. With FCC approval looking likely and a 2028 timeline on the table, the question isn't whether Musk is serious - it's whether the math actually works.

SpaceX just turned what seemed like an elaborate thought experiment into something much more concrete. When the company filed plans with the FCC last Friday for a million-satellite data center constellation, the tech world raised a collective eyebrow. A week later, the pieces are falling into place in a way that suggests Musk isn't joking around.

The formal merger between SpaceX and xAI went through on Monday, according to TechCrunch reporting. The move officially links Musk's rocket company with his AI venture in a marriage that makes far more sense if you're planning to launch computing infrastructure into orbit. It's the kind of vertical integration that would make even Jeff Bezos jealous.

But the real signal that this is more than Musk's usual social media bravado came Wednesday, when the FCC accepted the filing and FCC Chairman Brendan Carr took the unusual step of publicly endorsing it on X. Throughout his tenure, Carr has made it clear which side he's on when it comes to the Trump administration's favored entrepreneurs. As long as Musk maintains his position in the inner circle, regulatory hurdles look more like speed bumps.

The economics, though, are where things get interesting - and contentious. On a new episode of the "Cheeky Pint" podcast hosted by Stripe co-founder Patrick Collison, Musk laid out his case for why computing should migrate to space. The core argument hinges on energy costs, which represent one of the biggest operational expenses for AI data centers.

"It's harder to scale on the ground than it is to scale in space," Musk told Collison and guest Dwarkesh Patel. "Any given solar panel is going to give you about five times more power in space than on the ground, so it's actually much cheaper to do in space."

The solar efficiency claim is technically accurate - without atmospheric interference, and with near-continuous sun exposure in the right orbit, orbital solar panels do generate significantly more power. But Patel pushed back on the logical leap from "more power per panel" to "cheaper overall operation." Power is just one line item in data center economics, and solar is just one way to generate it. The cost of launching hardware into orbit, maintaining it in the harsh space environment, and replacing failed components could dwarf any energy savings.
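To make Patel's objection concrete, here is a minimal back-of-the-envelope sketch in Python. Every dollar figure in it is a made-up placeholder, not a SpaceX or industry number; the only input taken from the podcast is Musk's claim that a panel yields about five times more power in orbit. The `Scenario` class and `breakeven_deploy_cost` function are hypothetical names introduced for illustration. The sketch compares lifetime cost per kilowatt-hour and asks how much an operator could spend on launch before the fivefold power advantage is used up.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass(frozen=True)
class Scenario:
    """Hypothetical cost inputs for one solar-powered deployment."""
    hardware_usd: float          # cost of the panel hardware itself
    deploy_usd: float            # ground installation, or launch to orbit
    upkeep_usd_per_year: float   # maintenance and replacement of failed parts
    avg_output_kw: float         # average power actually delivered
    lifetime_years: float

    def lifetime_kwh(self) -> float:
        return self.avg_output_kw * HOURS_PER_YEAR * self.lifetime_years

    def lifetime_cost(self) -> float:
        return (self.hardware_usd + self.deploy_usd
                + self.upkeep_usd_per_year * self.lifetime_years)

    def cost_per_kwh(self) -> float:
        return self.lifetime_cost() / self.lifetime_kwh()


def breakeven_deploy_cost(ground: Scenario, orbit: Scenario) -> float:
    """Highest orbital deploy cost at which orbit matches ground cost per kWh."""
    budget = ground.cost_per_kwh() * orbit.lifetime_kwh()
    return budget - orbit.hardware_usd - orbit.upkeep_usd_per_year * orbit.lifetime_years


# Placeholder numbers only. The 5x output ratio (1.0 kW vs 0.2 kW) mirrors
# Musk's "about five times more power" claim; everything else is assumed.
ground = Scenario(hardware_usd=1000, deploy_usd=500, upkeep_usd_per_year=20,
                  avg_output_kw=0.2, lifetime_years=10)
orbit = Scenario(hardware_usd=1000, deploy_usd=20000, upkeep_usd_per_year=100,
                 avg_output_kw=1.0, lifetime_years=10)

print(f"ground: ${ground.cost_per_kwh():.3f}/kWh")
print(f"orbit:  ${orbit.cost_per_kwh():.3f}/kWh")
print(f"break-even orbital deploy cost: ${breakeven_deploy_cost(ground, orbit):,.0f}")
```

Under these invented inputs, five times the power buys only a finite launch budget: once deployment and upkeep exceed it, the orbital panel delivers more electricity at a higher price per kilowatt-hour. Whether real launch and servicing costs land above or below that line is exactly what the podcast exchange left unresolved.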

The GPU servicing problem is particularly thorny. When graphics processing units fail during AI model training - which happens regularly in massive compute clusters - ground-based data centers can swap them out in hours. In orbit, you're looking at a fundamentally different maintenance equation, one that Musk didn't fully address on the podcast.

None of this has dampened Musk's enthusiasm or his timeline. He's marking 2028 as the inflection point when orbital data centers become economically superior to terrestrial ones. "You can mark my words, in 36 months but probably closer to 30 months, the most economically compelling place to put AI will be space," he said.

Then he went even bigger. "Five years from now, my prediction is we will launch and be operating every year more AI in space than the cumulative total on Earth." For context, global data center capacity is projected to reach 200 gigawatts by 2030, representing roughly $1 trillion in infrastructure value. Musk is essentially claiming that within five years, each year's orbital launches will add more AI capacity than that entire installed base.

The timing is hardly coincidental. SpaceX is in the business of launching payloads into space, and now it conveniently has an AI subsidiary that could become its own best customer. With the newly merged SpaceX-xAI entity heading toward an IPO in the coming months, expect orbital data centers to feature prominently in investor pitches.

The broader AI infrastructure race provides context for why this matters beyond Musk's empire-building. Tech giants are pouring hundreds of billions annually into data center expansion, constrained by power availability, cooling costs, and real estate. If orbital computing proves even marginally viable, it represents a genuine paradigm shift - and a massive new revenue stream for whoever controls the launch infrastructure.

Skeptics will note that Musk's predictions have a mixed track record. Full self-driving has been perpetually "next year" for nearly a decade. But SpaceX has also achieved things - like routine rocket reusability - that the aerospace establishment dismissed as impossible. The company's Starlink network already operates thousands of satellites successfully, proving SpaceX can manage orbital infrastructure at scale.

The regulatory fast-track from Carr's FCC suggests Musk won't face serious governmental resistance, at least not domestically. The technical and economic questions remain wide open, but with the merger complete and regulatory approval looking likely, we're about to find out whether the physics and finances of space-based AI computing actually pencil out.

What started as an eyebrow-raising FCC filing is rapidly crystallizing into a concrete plan with regulatory backing, capital support, and a hard timeline. Whether Musk can actually make the economics work remains the trillion-dollar question - literally. But between the SpaceX-xAI merger, FCC fast-tracking, and Musk's public commitment to a 2028 timeline, orbital AI computing has moved from thought experiment to something the industry needs to take seriously. The next 30 months will reveal whether this is visionary infrastructure planning or the most expensive bet in tech history. Either way, the data center industry just got a lot more interesting.