The Pentagon found a workaround. Sources tell Wired that the Defense Department tested OpenAI's technology through Microsoft's Azure cloud platform while the AI company still banned military applications, raising serious questions about policy enforcement and corporate oversight in the rush to deploy AI. The revelation comes as OpenAI now openly courts defense contracts, but the timing suggests the military was experimenting with ChatGPT's underlying models well before the company's January 2024 policy reversal.

OpenAI built its brand on responsible AI development. The company's use policy explicitly banned military applications for years. But according to sources speaking to Wired, the Pentagon was already testing the company's models through a convenient loophole: Microsoft.

The Defense Department allegedly experimented with Microsoft's Azure-hosted version of OpenAI technology while the ChatGPT maker's military prohibition remained in place. It's a classic case of policy arbitrage: what OpenAI banned directly, the military apparently accessed indirectly through the company's biggest investor and deployment partner.

Microsoft has poured $13 billion into OpenAI since 2019, securing exclusive cloud hosting rights and enterprise distribution through Azure. That arrangement gave the tech giant its own licensing terms for OpenAI models, terms that didn't necessarily mirror OpenAI's consumer-facing restrictions. For the Pentagon, that gap proved useful.

The allegations paint a messy picture of AI governance in practice. OpenAI publicly maintained its military use ban until January 2024, when the company quietly updated its usage policies to allow defense applications. CEO Sam Altman later confirmed the shift, stating that OpenAI would work with the U.S. government on cybersecurity and other national security projects.

But if the sources are accurate, the Pentagon wasn't waiting for permission. The Defense Department was already running experiments through Microsoft's enterprise channels, testing how large language models could handle military-relevant tasks. The exact nature of those tests remains unclear, but the mere fact that they took place before OpenAI's policy change raises uncomfortable questions.

This isn't just about one company's rules. It's about what happens when cutting-edge AI gets distributed through complex corporate partnerships. OpenAI builds the models. Microsoft hosts and sells them. Enterprise customers, including government agencies, access them through Azure. At each step, different terms of service apply, different oversight mechanisms kick in, and different interpretations of acceptable use emerge.

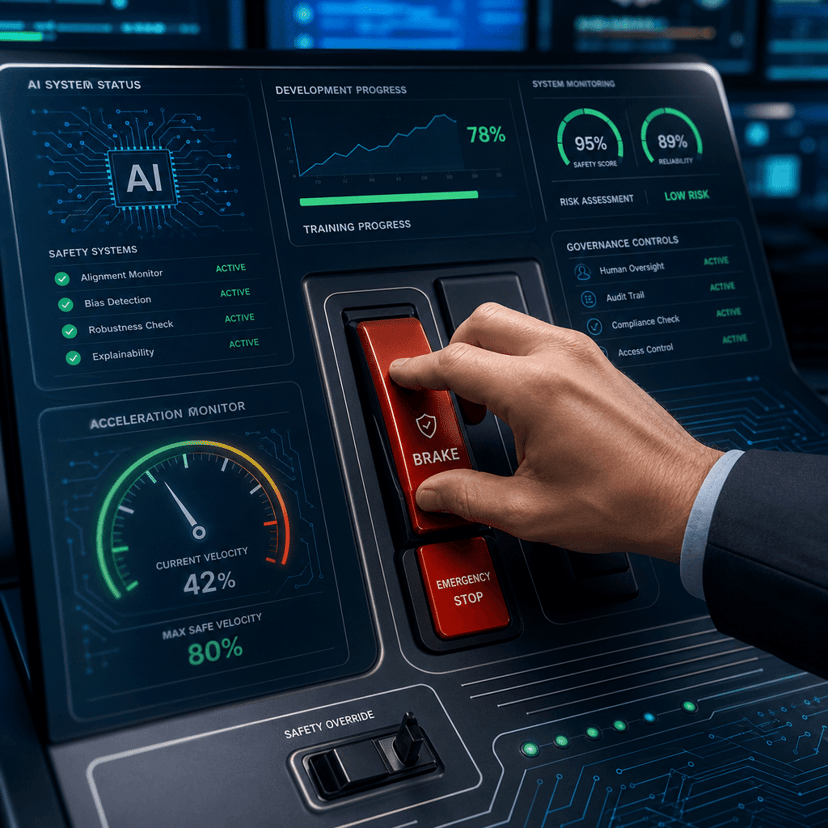

The episode also highlights the tension between AI safety rhetoric and commercial reality. OpenAI positioned itself as more cautious than its competitors, a company willing to restrict powerful technology even when others wouldn't. That narrative helped the company attract talent, secure favorable press coverage, and build trust with policymakers concerned about AI risks.

But if the military was already using OpenAI models through Microsoft, that cautious image looks more like marketing than meaningful constraint. The technology flowed to defense applications regardless of OpenAI's stated policy, just through a different corporate channel.

Microsoft has long maintained separate enterprise agreements that give large customers broad deployment rights for Azure AI services. Those agreements predate much of the current debate over military AI use and weren't written with OpenAI's specific ethical guidelines in mind. When Microsoft integrated GPT-4 and other OpenAI models into Azure, customers with existing enterprise licenses gained access under their original terms.

For the Pentagon, that meant OpenAI technology without OpenAI's restrictions. Defense officials didn't need to negotiate directly with the startup or convince its leadership that military applications aligned with the company's mission. They just needed an Azure contract, something the Defense Department already had for countless other cloud services.

The timing of OpenAI's policy change looks different in this light. In January 2024, the company revised its usage policies to permit certain government defense projects, a shift it framed as a thoughtful evolution of its approach. But if the Pentagon was already experimenting through Microsoft, OpenAI was essentially catching its policy up to reality rather than charting new territory.

Since officially opening the door to defense work, OpenAI has pursued military contracts more aggressively. The company joined other AI firms in pitching the Pentagon on everything from intelligence analysis to logistics optimization. That's a dramatic shift from the company's earlier stance, when military applications seemed fundamentally at odds with its safety-focused mission.

The Wired report doesn't detail exactly how the Pentagon used OpenAI models or whether Microsoft knew the technology would support defense applications. But it doesn't need to. The story's power comes from what it reveals about AI policy enforcement in an era of complex corporate partnerships and cloud-based distribution.

What does it mean to ban military use when your technology is embedded in enterprise platforms with millions of users and hundreds of use cases? How do you enforce restrictions when third-party distributors operate under different rules? And who's actually responsible when stated policies don't match actual deployment patterns?

The Pentagon's alleged end-run around OpenAI's military ban exposes a fundamental challenge in governing AI deployment. As models move from research labs to enterprise clouds to end users, control gets harder and stated policies matter less. OpenAI may have banned military use on paper, but the technology still reached defense applications through Microsoft's distribution channels. That's not a loophole to close but a reality to acknowledge: in the age of cloud AI, the line between what companies allow and what actually happens gets blurrier with each partnership. For OpenAI, the episode suggests its cautious reputation was always more fragile than it seemed, dependent on everyone in the distribution chain honoring restrictions the company itself couldn't directly enforce.