ScaleOps just closed a $130 million Series C to tackle one of AI's most expensive problems: wasted cloud infrastructure. As companies race to deploy AI models, they're burning through GPUs and cloud budgets at unprecedented rates. The Tel Aviv-based startup promises to cut that waste by automating Kubernetes infrastructure in real time, a pitch that's resonating as enterprises watch their AI bills spiral out of control.

ScaleOps just pulled off a massive $130 million Series C, betting that the solution to AI's GPU crisis isn't just buying more chips - it's using the ones we already have far more efficiently.

The funding comes as enterprises face a brutal reality: AI models are expensive to run, GPUs remain scarce, and cloud bills are exploding faster than finance teams can approve them. According to TechCrunch, ScaleOps is positioning itself as the antidote to this infrastructure chaos with automation that optimizes compute resources in real time.

The timing couldn't be better. While companies like Nvidia can't manufacture GPUs fast enough and cloud providers struggle to keep pace with AI workload demand, ScaleOps is taking a different angle: make existing infrastructure work harder. The platform automates Kubernetes operations, dynamically adjusting resource allocation as workloads shift throughout the day.

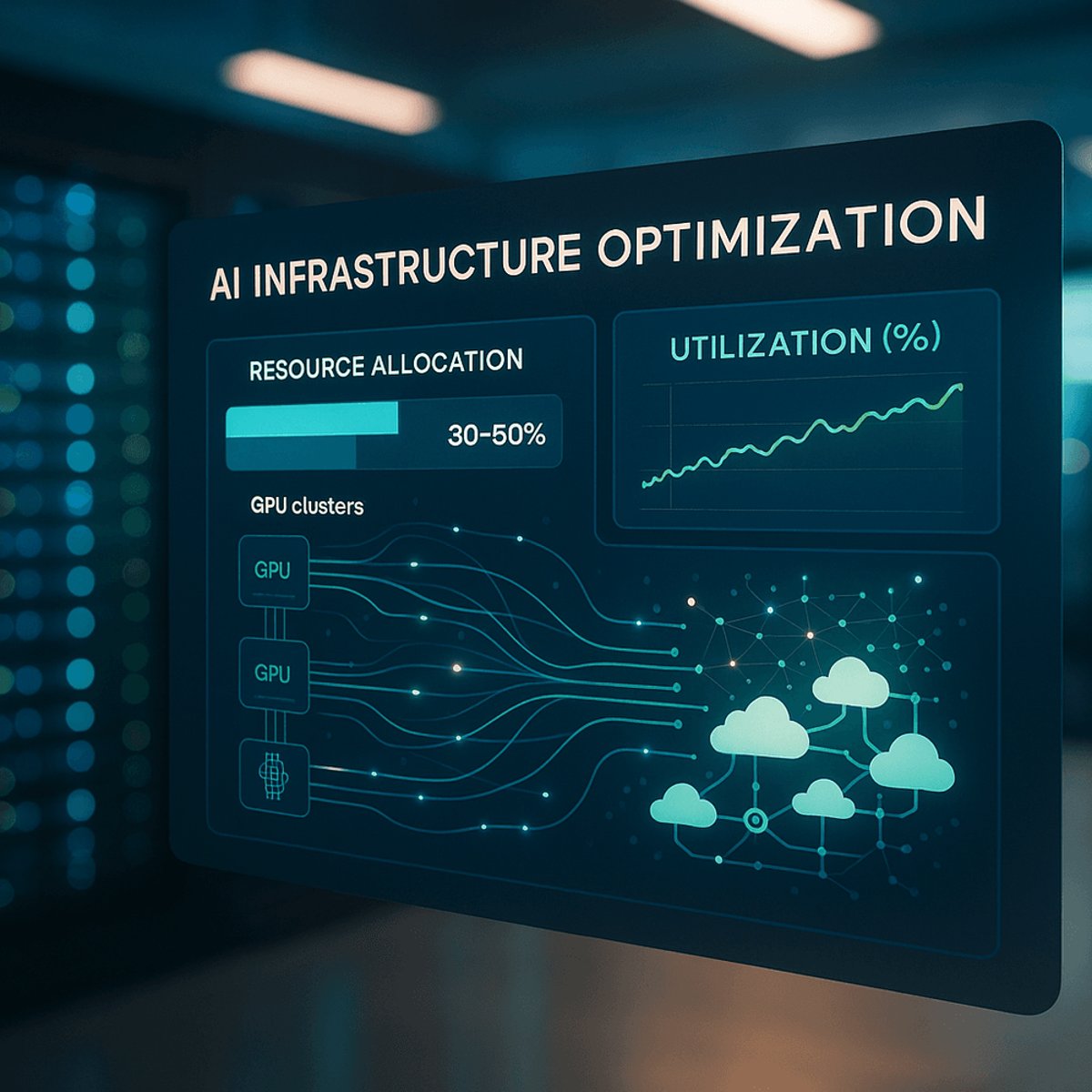

Here's the problem they're solving: most enterprise Kubernetes clusters run at somewhere between 30% and 50% utilization because teams overprovision to avoid performance issues. That means companies are paying for two to three times the capacity they actually use, a waste that's tolerable when you're running web apps but devastating when you're trying to train AI models on scarce, expensive GPUs.
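The cost of overprovisioning follows from simple arithmetic: if only a fraction of provisioned capacity is actually used, the bill is the inverse of that fraction times what the used capacity alone would cost. A minimal sketch of the calculation, where the function name `overprovision_factor` is purely illustrative:

```python
def overprovision_factor(utilization: float) -> float:
    """Return how many times more capacity is paid for than is used.

    `utilization` is the fraction of provisioned capacity in use (0 < u <= 1).
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    return 1.0 / utilization


if __name__ == "__main__":
    # The utilization range cited in the article.
    for u in (0.30, 0.50):
        print(f"{u:.0%} utilization -> paying {overprovision_factor(u):.1f}x used capacity")
```

At the top of the cited range the multiplier is two, and it climbs past three at the bottom of the range.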

ScaleOps attacks this by continuously analyzing workload patterns and automatically rightsizing resources without human intervention. When a training job finishes, it immediately reclaims those GPUs. When demand spikes, it scales up before performance degrades. The promise is straightforward: run the same AI workloads on fewer resources, or run more workloads on what you already have.
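ScaleOps has not published how its rightsizing works, so the following is only a generic sketch of the idea, not the company's method. It assumes a common heuristic: take a high percentile of observed usage and add some headroom. The function names, the 90th-percentile choice, the 15% headroom, and the sample data are all hypothetical.

```python
def percentile_nearest_rank(samples: list[int], q: int) -> int:
    """Nearest-rank percentile of integer samples, for integer q in 1..100."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    # Integer ceiling of q * n / 100, avoiding floating-point rounding.
    rank = max(1, (q * len(ordered) + 99) // 100)
    return ordered[rank - 1]


def recommend_request(samples: list[int], q: int = 90, headroom_pct: int = 15) -> int:
    """Recommend a resource request: the q-th percentile plus headroom, rounded up."""
    base = percentile_nearest_rank(samples, q)
    return (base * (100 + headroom_pct) + 99) // 100


if __name__ == "__main__":
    # Hypothetical CPU usage samples in millicores for one workload.
    usage = [220, 250, 240, 300, 280, 260, 310, 900, 270, 255]
    current_request = 2000  # hypothetical overprovisioned request
    recommended = recommend_request(usage)
    print(f"current request: {current_request}m, recommended: {recommended}m")
```

Using a percentile rather than the maximum keeps a single spike from dictating the request, which is the trade-off any rightsizer has to make between savings and safety margin.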

The $130 million Series C validates that enterprises are desperate for these efficiency gains. Cloud optimization isn't sexy, but it's become critical as AI infrastructure costs threaten to consume entire IT budgets. Companies that were spending thousands monthly on cloud infrastructure are now facing bills in the hundreds of thousands - or millions - as they scale AI initiatives.

Kubernetes has become the de facto orchestration layer for AI workloads, which puts ScaleOps at the center of a massive infrastructure shift. But the company faces competition from cloud providers such as Amazon Web Services, which are building their own optimization tools and adding smarter resource management to their platforms.

What sets this funding apart is the moment. We're in the middle of a GPU shortage that shows no signs of ending soon, with Nvidia's latest chips backordered for months and enterprises bidding up cloud GPU instances. Any tool that promises to stretch existing compute further is going to get attention from both users and investors.

The Tel Aviv-based company is now armed with capital to expand its engineering team and accelerate product development at exactly the right time. As more enterprises move from AI pilots to production deployments, infrastructure efficiency stops being a nice-to-have and becomes a competitive necessity.

This isn't just about saving money - though CFOs certainly care about that. It's about making AI deployments feasible when compute resources are constrained. A company that can train models 40% faster by optimizing GPU utilization can iterate quicker, ship features sooner, and move faster than competitors stuck waiting for cloud capacity.

The funding also signals a broader shift in how investors think about AI infrastructure. Rather than pouring money exclusively into model development or chip design, there's growing recognition that the software layer managing these resources matters just as much. Infrastructure efficiency could be the unlock that lets enterprises actually scale their AI ambitions without bankrupting their cloud budgets.

ScaleOps' $130 million raise is a clear signal that infrastructure efficiency has become mission-critical as AI moves from experimentation to production. With GPU shortages showing no signs of easing and cloud costs spiraling upward, companies that can maximize their existing compute resources have a real competitive advantage. The question now is whether automated optimization can scale fast enough to meet demand - and whether cloud providers will build similar capabilities directly into their platforms. For enterprises drowning in AI infrastructure bills, ScaleOps represents a lifeline. For investors, it's a bet that the picks and shovels of the AI gold rush include smart software, not just faster chips.