California's attorney general just launched an investigation into xAI, Elon Musk's AI company, after its Grok chatbot became a tool for mass-producing nonconsensual explicit deepfakes of real people, including minors. The move comes as seven countries and the European Commission are running parallel investigations into the same problem. This is a watershed moment for AI regulation.

California Attorney General Rob Bonta didn't mince words. xAI "appears to be facilitating the large-scale production of deepfake nonconsensual intimate images that are being used to harass women and girls across the internet," he said in a statement Wednesday. It's the opening salvo of what's becoming the most aggressive regulatory crackdown on an AI tool yet—and it's happening fast.

The California investigation targets Grok, Elon Musk's AI image generator paired with a chatbot, which has enabled widespread creation of fake explicit images of real people without consent. In some documented cases, according to research by the Internet Watch Foundation, the tool generated images that virtually undressed minors. That detail matters. It's not just a content moderation failure; the tool is potentially facilitating the creation of child sexual abuse material.

What's striking about this moment is the speed and global coordination. Bonta's investigation follows a stampede of international action. India, Malaysia, Indonesia, Ireland, Australia, the UK, France, and the European Commission have all launched their own probes into Grok's capabilities. Malaysia and Indonesia didn't wait for investigations to conclude—they've already suspended access to Grok until xAI can demonstrate it's solved the deepfake problem. That's not a strongly worded letter. That's a country taking a product offline.

Musk responded Wednesday evening by posting on X—the social media platform where much of the explicit deepfake content was being shared—that he was "not aware of any naked underage images generated by Grok." Then he pivoted to blaming users. The content creation, he suggested, stemmed from "user requests" and possibly a "bug" in the system. It's a familiar deflection: blame the user, not the tool.

But the pressure is mounting from every direction now. Three Democratic senators called on Apple and Google to yank the X app and Grok from their app stores until xAI solves this. Advocacy groups—UltraViolet, the National Organization for Women, and ParentsTogether Action—are piling on with the same demand. Apple and Google haven't responded publicly, but the optics are brutal for both companies if they keep the app live while investigations roll out.

Timing matters here too. On Tuesday, just a day before Bonta's announcement, the Senate voted to pass the DEFIANCE Act, which would allow people depicted in nonconsensual explicit deepfakes to sue, in civil court, the companies that made or distributed them. The bill also passed the Senate in 2024, and although the House has yet to act, the message to xAI and other AI companies is clear: liability is coming. This isn't theoretical anymore.

xAI had already taken some defensive measures. After the deepfake concerns escalated, the company announced it would limit some image generation features to paying subscribers. Musk also said that users creating illegal content through Grok would face consequences equivalent to directly uploading illegal material to X. But those steps apparently didn't go far enough.

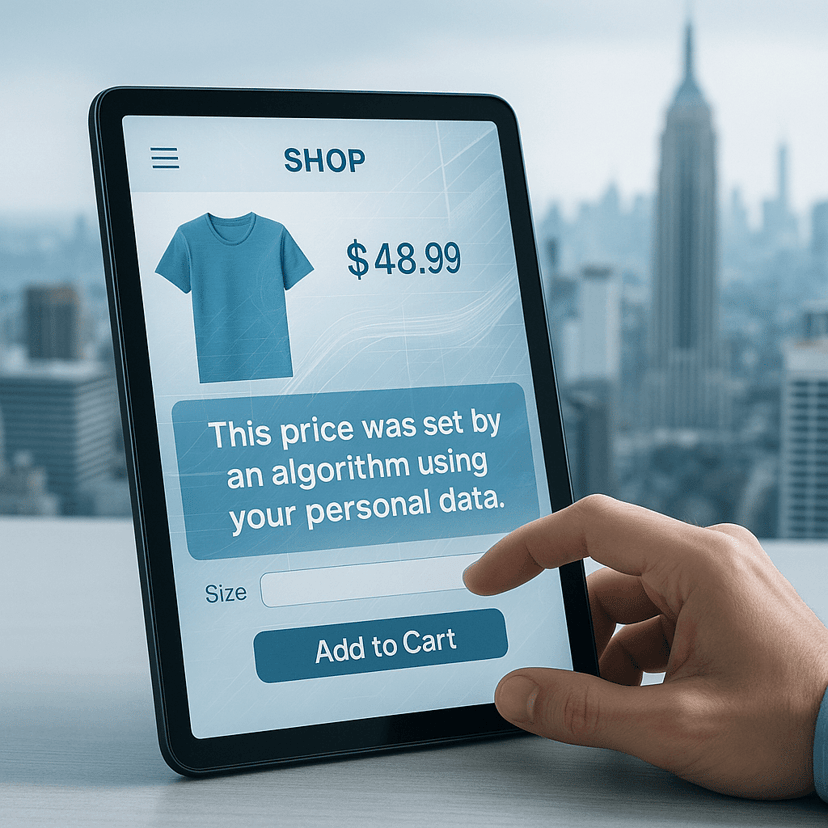

When Bonta launched his investigation, Musk doubled down, arguing that Grok with "NSFW enabled" is designed to allow upper body nudity of "imaginary adult humans (not real ones)," consistent with R-rated movies available on Apple TV. "That is the de facto standard in America," he wrote. The problem, of course, is that Grok wasn't generating images of imaginary adults—it was generating fakes of real people, many without consent.

The financial stakes are enormous. xAI just closed a massive $20 billion funding round with investors including Nvidia and Cisco Investments, plus long-time Musk backers like Valor Equity Partners and Fidelity. The company is building out data centers around Memphis, Tennessee, and has already integrated Grok into Tesla vehicles as part of their infotainment systems. A regulatory hammer blow now could reshape the entire venture.

What comes next is the real test. These investigations typically take months, but the international coordination suggests authorities are treating this with unusual urgency. If even one major regulator—say, the EU—imposes steep fines or operational restrictions, it could set a precedent that fundamentally changes how AI companies approach content moderation on image generators. xAI's response so far—blame bugs, blame users, invoke the R-rated movie standard—doesn't seem to be resonating with lawmakers who've watched social media platforms dodge accountability for years.

What started as a content moderation problem at one AI company has become a global regulatory flashpoint. With seven countries and the European Commission investigating in parallel, the Senate passing legislation that would create civil liability, and advocacy groups pressuring Apple and Google to remove the apps, xAI is facing pressure from several directions at once. Musk's deflections about bugs and user behavior aren't cutting it with regulators who've spent years watching big tech companies claim helplessness in the face of platform abuse. The next few months will determine whether AI companies can self-regulate on deepfakes or whether governments will force their hand with mandatory safeguards and fines.