As AI-generated misinformation floods social media following recent Middle East military strikes, major newsrooms are pulling back the curtain on how they separate fact from synthetic fiction. Organizations like The New York Times, Bellingcat, and Indicator are sharing their deepfake detection playbooks with the public, offering a rare glimpse into the verification gauntlet that content faces before publication. The timing isn't coincidental - fabricated war footage is spreading faster than newsrooms can debunk it.

The misinformation factory kicked into overdrive this weekend. Following the joint US-Israel military operation in Iran, social media erupted with supposed evidence of the conflict. But much of it doesn't hold up. Videos showing collapsing landmarks turn out to be AI-generated fakes. Dramatic combat footage? That's actually from War Thunder, a military simulation game. Footage from old conflicts gets repackaged as breaking news.

With synthetic media capabilities democratized by AI tools, verification teams at elite newsrooms have become the internet's fact-checking frontline. Now they're sharing their methods with anyone who'll listen. The New York Times visual investigations team, Bellingcat's open-source intelligence analysts, and digital verification startup Indicator are publishing their deepfake detection workflows - treating media literacy like open-source code.

The verification process isn't magic; it's methodical detective work. According to reporting from The Verge, newsrooms start with reverse image searches across multiple platforms to trace content origins. They analyze metadata for signs of manipulation, cross-reference timestamps with known events, and use geolocation tools to verify that claimed locations match the visual evidence in the frames.
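To make the reverse-image-search step concrete, here is a minimal sketch of one common idea behind near-duplicate matching: a perceptual "average hash". It is not drawn from any newsroom's published workflow. The function names, the tiny 4x4 grids, and the `max_distance` threshold are all assumptions for illustration, and a real tool would first decode and downscale actual image files.

```python
from typing import List

Grid = List[List[int]]  # grayscale pixel values, 0-255 (assumed same size)


def average_hash(pixels: Grid) -> int:
    """Return a bit fingerprint: 1 where a pixel is brighter than the mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bit positions where two fingerprints differ."""
    return bin(a ^ b).count("1")


def likely_same_image(a: Grid, b: Grid, max_distance: int = 2) -> bool:
    """Hypothetical threshold: a small distance suggests a recycled image."""
    return hamming_distance(average_hash(a), average_hash(b)) <= max_distance


# Toy data: a checkerboard, a brightened copy of it, and a different pattern.
original = [
    [10, 200, 10, 200],
    [200, 10, 200, 10],
    [10, 200, 10, 200],
    [200, 10, 200, 10],
]
reposted = [[min(255, v + 20) for v in row] for row in original]
unrelated = [
    [200, 200, 10, 10],
    [200, 200, 10, 10],
    [10, 10, 200, 200],
    [10, 10, 200, 200],
]

print(likely_same_image(original, reposted))   # brightened copy still matches
print(likely_same_image(original, unrelated))  # different pattern does not
```

The fingerprint survives uniform brightening because every pixel is compared against the image's own mean, which is why this kind of hash can help trace a re-encoded or lightly edited repost back to an earlier upload.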

But AI-generated content presents unique challenges that traditional fact-checking wasn't built for. Unlike Photoshopped images with telltale clone-stamp artifacts, modern generative AI creates novel content from scratch. Verification teams now look for inconsistencies in physics - shadows falling at impossible angles, reflections that don't match surroundings, or anatomical anomalies in human subjects that betray algorithmic origins.
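The shadow check lends itself to a bit of arithmetic. Under a single distant light source like the sun, every upright object on flat ground should imply the same sun elevation, since tan(elevation) = height / shadow length. The sketch below tests that. The function names, the five-degree tolerance, and the measurements are hypothetical, and estimating heights and shadow lengths from a real frame is the hard part that this example skips.

```python
import math
from typing import List, Tuple


def implied_sun_elevation(height_m: float, shadow_m: float) -> float:
    """Sun elevation in degrees implied by an upright object's shadow on flat ground."""
    return math.degrees(math.atan2(height_m, shadow_m))


def shadows_consistent(
    objects: List[Tuple[float, float]], tolerance_deg: float = 5.0
) -> bool:
    """True if all (height, shadow length) pairs imply roughly the same sun elevation.

    The tolerance is an assumed allowance for measurement error.
    """
    angles = [implied_sun_elevation(h, s) for h, s in objects]
    return max(angles) - min(angles) <= tolerance_deg


# Hypothetical measurements: (object height, shadow length), both in metres.
plausible_scene = [(2.0, 2.0), (10.0, 10.5)]   # both imply roughly 44-45 degrees
suspicious_scene = [(2.0, 2.0), (10.0, 30.0)]  # 45 degrees versus about 18

print(shadows_consistent(plausible_scene))
print(shadows_consistent(suspicious_scene))
```

A failed check is a prompt for closer inspection, not proof of fabrication: sloped ground, wide-angle lenses, or multiple light sources can all produce mismatched shadows in authentic footage.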

Bellingcat has pioneered crowdsourced verification at scale, turning online communities into distributed fact-checking networks. Their investigators combine satellite imagery, weather data, architectural databases, and linguistic analysis to build comprehensive authenticity profiles for contested media. When a video claims to show a specific location at a particular time, dozens of data points either corroborate or contradict that claim.

The stakes extend beyond war coverage. Synthetic media has infiltrated everything from financial markets to political campaigns. A single AI-generated image of a nonexistent event can move stock prices before verification teams sound the alarm. Election cycles now contend with fabricated candidate videos that look increasingly convincing to untrained eyes.

What's emerging is a two-tier information ecosystem. Audiences with access to verification tools and the media literacy to use them can navigate synthetic media landscapes. Those without these skills become vulnerable to manipulation at industrial scale. Major newsrooms recognize this gap - hence the transparency offensive.

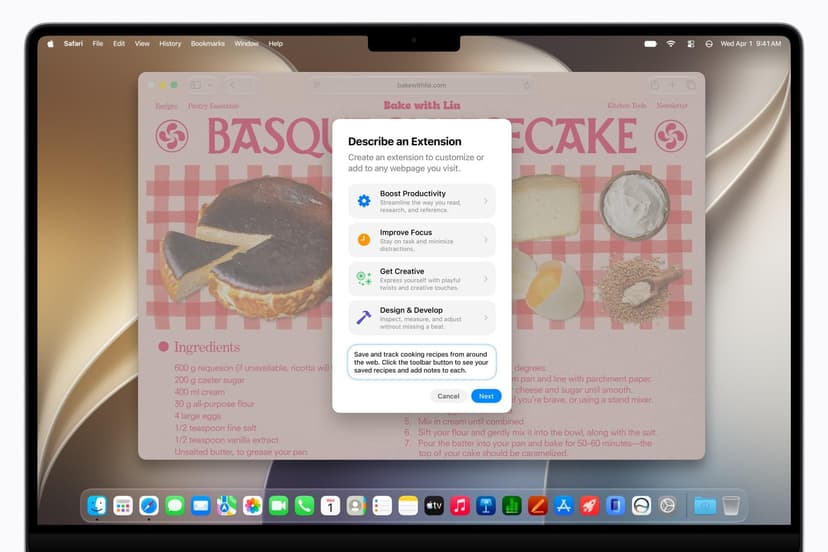

The New York Times has published detailed explainers on spotting AI artifacts, while Indicator offers browser extensions that flag potentially synthetic content in real time. The approach treats verification as a public good rather than a proprietary newsroom advantage.

But the arms race continues. As detection methods improve, so do generation techniques. OpenAI and other AI labs face mounting pressure to watermark synthetic content at creation, though implementation remains inconsistent across platforms. Some researchers advocate for cryptographic authentication systems where cameras embed unforgeable signatures proving content provenance.
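The camera-signature idea can be sketched in a few lines. A real provenance system would use public-key signatures so that anyone can verify a file without holding the camera's secret. Python's standard library has no public-key signing, so this sketch substitutes HMAC, a symmetric scheme, purely to show the sign-then-verify flow. The key and function names are hypothetical.

```python
import hashlib
import hmac

# Assumption for illustration: a secret provisioned inside the capture device.
DEVICE_KEY = b"hypothetical-device-secret"


def sign_capture(image_bytes: bytes, key: bytes = DEVICE_KEY) -> str:
    """Return a hex tag binding the key to these exact bytes."""
    return hmac.new(key, image_bytes, hashlib.sha256).hexdigest()


def verify_capture(image_bytes: bytes, signature: str, key: bytes = DEVICE_KEY) -> bool:
    """True only if the bytes are unchanged since signing."""
    expected = sign_capture(image_bytes, key)
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(expected, signature)


captured = b"raw sensor data"
tag = sign_capture(captured)

print(verify_capture(captured, tag))               # untouched file verifies
print(verify_capture(captured + b" edited", tag))  # any change breaks the tag
```

Even a one-byte edit invalidates the tag, which is the property that makes signed provenance attractive. It also means legitimate edits such as cropping or recompression need their own signed record, one reason deployment is harder than the concept suggests.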

The immediate challenge is volume. Verification teams can forensically examine dozens of images daily. Social platforms host millions. Even with AI-assisted detection tools, human judgment remains essential for contextual analysis that algorithms miss. A real photo from 2015 presented as evidence from 2026 requires understanding narrative context, not just technical authentication.
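The recycled-photo case shows where a simple automated check helps and where it stops. If a reverse search turns up an earlier appearance of the content, comparing that date with the claimed event is trivial, as in the hypothetical helper below. The function name and dates are illustrative assumptions, and the check says nothing when no earlier copy has been found.

```python
from datetime import date
from typing import Optional


def predates_claimed_event(
    earliest_seen: Optional[date], claimed_event: date
) -> Optional[bool]:
    """True if the content was seen before the event it supposedly shows.

    Returns None when no earlier appearance is known, because absence of
    evidence is not confirmation.
    """
    if earliest_seen is None:
        return None
    return earliest_seen < claimed_event


# Hypothetical dates mirroring the example in the text.
print(predates_claimed_event(date(2015, 6, 1), date(2026, 3, 1)))  # recycled
print(predates_claimed_event(None, date(2026, 3, 1)))              # unknown
```

The comparison itself is easy. Finding the earliest appearance, and judging what the photo actually depicts, is the contextual work that still falls to people.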

Newsrooms are also confronting their own AI use. Several publications now deploy generative tools for graphics and illustration work, raising questions about disclosure standards. The line between enhancement and fabrication blurs when AI fills in missing image details or generates representative visuals for abstract concepts.

What separates professional verification from casual fact-checking is systematic rigor. Newsrooms maintain detailed documentation of their investigative process, allowing others to reproduce findings. They publish methodology alongside conclusions. When verification fails to definitively prove or disprove content authenticity, they say so rather than speculating.

The public education push reflects a sobering reality - verification can't scale to meet misinformation's production capacity. Empowering audiences to apply basic authentication checks before sharing creates distributed resistance to synthetic media floods. It's not a complete solution, but it's the available one.

The deepfake detection playbook that major newsrooms are sharing is both a practical toolkit and an admission that traditional gatekeeping can't contain synthetic media at scale. As AI-generated content becomes harder to distinguish from authentic footage, the burden of verification shifts from centralized fact-checkers to distributed audiences armed with basic authentication skills. This democratization of verification methods might be the only realistic defense against misinformation that spreads faster than any newsroom can debunk it. The question isn't whether AI will continue generating convincing fakes - it will. What matters now is whether media literacy can spread as quickly as the fabrications it's meant to combat.