X just rolled out what looks like a privacy win for users worried about AI manipulation of their photos - but the fine print tells a different story. The platform's new toggle to "block modifications by Grok" doesn't actually prevent xAI's chatbot from editing your images. According to testing by The Verge and Social Media Today, the feature only stops one specific interaction method, leaving users' photos just as vulnerable to AI manipulation as before. It's a reminder that in the age of generative AI, reading the terms and conditions matters more than ever.

X is trying to give users more control over how xAI's Grok chatbot interacts with their photos - or at least that's what the new feature promises. But anyone hoping for real protection from AI image manipulation should read the fine print carefully.

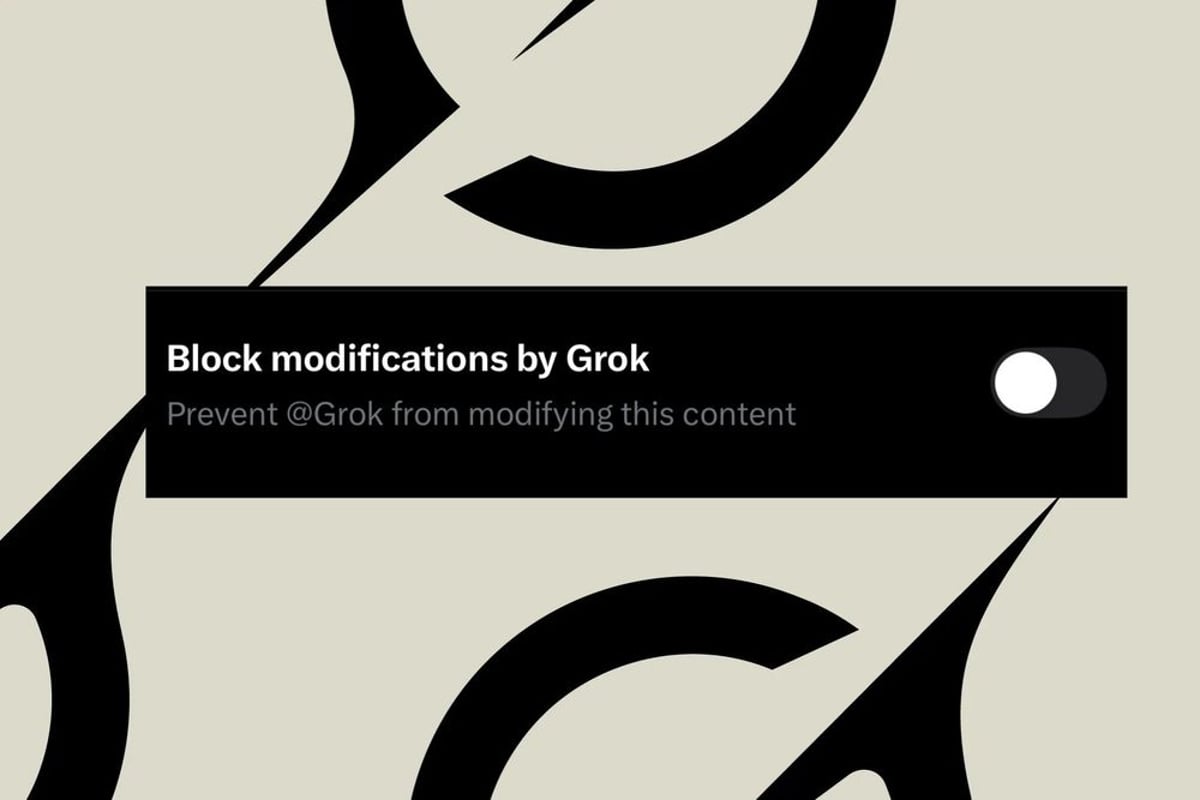

The toggle, spotted in the X iOS app's image upload settings, claims it can "block modifications by Grok" when enabled. First reported by Social Media Today and confirmed by The Verge, the feature sounds like a meaningful privacy control at first glance. But testing reveals it's far more limited than the name suggests.

Here's what the toggle actually does: it prevents other users from tagging @Grok in replies to your images. That's it. The small text underneath the feature name quietly admits as much: users can only "prevent @Grok from modifying this content" through that specific mechanism. Any other method of feeding your photos into Grok's AI editing capabilities? Still fair game.

The distinction matters because Grok's image-editing capabilities have raised serious concerns about deepfakes and image manipulation on social media. Users can still screenshot your images, download them, or use other methods to process them through xAI's chatbot. The toggle creates an illusion of protection while leaving the barn door wide open.

This isn't the first time a social platform has introduced AI controls that sound more protective than they actually are. But X's implementation feels particularly misleading given the straightforward promise in the feature's name. When users see "block modifications by Grok," they reasonably expect comprehensive protection - not a single interaction pathway being closed off.

The timing is notable too. As generative AI tools become more sophisticated at creating convincing fake images, platforms face mounting pressure to give users meaningful control over their content. X's response appears to split the difference between doing something and doing nothing, landing in an awkward middle ground that may frustrate users who discover the limitations after enabling the toggle.

For xAI, which Elon Musk founded as a competitor to OpenAI, Grok represents a key differentiator for X's premium subscriptions. The chatbot can generate and edit images convincingly, which is exactly what worries privacy advocates. Balancing those features with user consent has proven tricky.

What makes this particularly frustrating is that X clearly understands users want protection from unwanted AI manipulation. The company built the toggle interface, wrote the feature description, and pushed it to the iOS app. It just didn't follow through with functionality that matches the promise.

Users enabling the feature might feel a false sense of security, assuming their photos are now protected from Grok's editing capabilities. In reality, they've only blocked one narrow use case while the broader vulnerability remains. It's the digital equivalent of locking your front door while leaving all the windows open.

The feature's limitations also raise questions about X's product development priorities. Was this always meant to be a limited control, with the misleading name an oversight? Or did technical or business considerations prevent a more comprehensive implementation? Either way, the disconnect between marketing and reality doesn't inspire confidence.

For now, users concerned about AI manipulation of their photos on X have limited options. They can enable the toggle for whatever marginal protection it provides against @Grok tagging. They can watermark images before uploading. Or they can reconsider sharing sensitive photos on the platform entirely.

The situation reflects a broader challenge across social media as AI tools proliferate. Platforms want to offer cutting-edge AI features that drive engagement and subscriptions. But those same capabilities create privacy and consent issues that simple toggles can't fully address. X's half-measure of a solution highlights just how difficult that balance has become.

X's new Grok blocking feature is a masterclass in misleading product design - promising comprehensive protection while delivering only a narrow restriction on one interaction method. For users increasingly concerned about AI manipulation of their images, this half-measure offers little real security while potentially creating dangerous complacency. The episode serves as a reminder that as AI capabilities expand across social platforms, user controls need to be both transparent and comprehensive. Until X delivers actual protection that matches its marketing promises, anyone worried about their photos being fed into Grok should assume the chatbot can still access them.